Mobile Recommendation

Assistants, also known as Virtual Assistants, Intelligent Agents, or Virtual

Personal Assistants, are a collection of software features that combine a

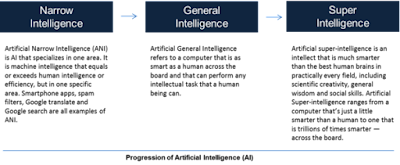

conversational user interface with artificial intelligence to act on behalf of

a user.

They may deliver what seems to a user as an agent when they

work together.

In this sense, an agent differs from a tool in that it has

the ability to act autonomously and make choices with some degree of autonomy.

Many qualities may be included into the design of mobile

suggestion helpers to improve the user's impression of agency.

Using visual avatars to represent technology, incorporating

features of personality such as humor or informal/colloquial language, giving a

voice and a legitimate name, constructing a consistent method of behaving, and

so on are examples of such tactics.

A human user can use a mobile recommendation assistant to

help them with a wide range of tasks, such as opening software applications,

answering questions, performing tasks (operating other software/hardware), or

engaging in conversational commerce or entertainment (telling stories, telling

jokes, playing games, etc.).

Apple's Siri, Baidu's Xiaodu, Amazon's Alexa, Microsoft's

Cortana, Google's Google Assistant, and Xiaomi's Xiao AI are among the mobile

voice assistants now in development, each designed for certain companies, use

cases, and user experiences.

A range of user interface modali ties are used by mobile

recommendation aides.

Some are completely text-based, and they are referred

regarded as chatbots.

Business to consumer (B2C) communication is the most common

use case for a chatbot, and notable applications include online retail

communication, insurance, banking, transportation, and restaurants.

Chatbots are increasingly being employed in medical and

psychological applications, such as assisting users with behavior modification.

Similar apps are becoming more popular in educational

settings to help students with language learning, studying, and exam

preparation.

Facebook Messenger is a prominent example of a chatbot on

social media.

While not all mobile recommendation assistants need

voice-enabled interaction as an input modality (some, such web site chatbots,

may depend entirely on text input), many contemporary examples do.

A Mobile Recommendation Assistant uses a number similar

predecessor technologies, including a voice-enabled user interface.

Early voice-enabled user interfaces were made feasible by a

command syntax that was hand-coded as a collection of rules or heuristics in

advance.

These rule-based systems allowed users to operate devices

without using their hands by delivering voice directions.

IBM produced the first voice recognition program, which was

exhibited during the 1962 World's Fair in Seattle.

The IBM Shoebox has a limited vocabulary of sixteen words

and nine numbers.

By the 1990s, IBM and Microsoft's personal computers and

software had basic speech recognition; Apple's Siri, which debuted on the

iPhone 4s in 2011, was the first mobile application of a mobile assistant.

These early voice recognition systems were disadvantaged in

comparison to conversational mobile agents in terms of user experience since

they required a user to learn and adhere to a preset command language.

The consequence of rule-based voice interaction might seem

mechanical when it comes to contributing to real humanlike conversation with

computers, which is a feature of current mobile recommendation assistants.

Instead, natural language processing (NLP) uses machine

learning and statistical inference to learn rules from enormous amounts of

linguistic data (corpora).

Decision trees and statistical modeling are used in natural

language processing machine learning to understand requests made in a variety

of ways that are typical of how people regularly communicate with one another.

Advanced agents may have the capacity to infer a user's

purpose in light of explicit preferences expressed via settings or other

inputs, such as calendar entries.

Google's Voice Assistant uses a mix of probabilistic

reasoning and natural language processing to construct a natural-sounding

dialogue, which includes conversational components such as paralanguage

("uh", "uh-huh", "ummm").

To convey knowledge and attention, modern digital assistants

use multimodal communication.

Paralanguage refers to communication components that don't

have semantic content but are nonetheless important for conveying meaning in

context.

These may be used to show purpose, collaboration in a

dialogue, or emotion.

The aspects of paralanguage utilized in Google's voice

assistant employing Duplex technology are termed vocal segre gates or speech

disfluencies; they are intended to not only make the assistant appear more

human, but also to help the dialogue "flow" by filling gaps or making

the listener feel heard.

Another key aspect of engagement is kinesics, which makes an

assistant feel more like an engaged conversation partner.

Kinesics is the use of gestures, movements, facial

expressions, and emotion to aid in the flow of communication.

The car firm NIO's virtual robot helper, Nome, is one recent

example of the application of face expression.

Nome is a digital voice assistant that sits above the

central dashboard of NIO's ES8 in a spherical shell with an LCD screen.

It can swivel its "head" automatically to attend

to various speakers and display emotions using facial expressions.

Another example is Dr. Cynthia Breazeal's commercial Jibo home robot from MIT, which uses anthropomorphism using paralinguistic approaches.

Motion graphics or lighting animations are used to

communicate states of communication such as listening, thinking, speaking, or

waiting in less anthropomorphic uses of kinesics, such as the graphical user

interface elements on Apple's Siri or illumination arrays on Amazon Alexa's

physical interface Echo or in Xiami's Xiao AI.

The rising intelligence and anthropomorphism (or, in some

circumstances, zoomorphism or mechanomorphism) that comes with it might pose

some ethical issues about user experience.

The need for more anthropomorphic systems derives from the

positive user experience of humanlike agentic systems whose communicative

behaviors are more closely aligned with familiar interactions like

conversation, which are made feasible by natural language and paralinguistics.

Natural conversation systems have the fundamental advantage

of not requiring the user to learn new syntax or semantics in order to successfully

convey orders and wants.

These more humanistic human machine interfaces may employ a

user's familiar mental model of communication, which they gained through

interacting with other people.

Transparency and security become difficulties when a user's

judgments about a machine's behavior are influenced by human-to-human

communication as machine systems become closer to human-to-human contact.

The establishment of comfort and rapport may obscure the

differences between virtual assistant cognition and assumed motivation.

Many systems may be outfitted with motion sensors, proximity

sensors, cameras, tiny phones, and other devices that resemble, replicate, or

even surpass human capabilities in terms of cognition (the assistant's

intellect and perceptive capacity).

While these can be used to facilitate some humanlike

interaction by improving perception of the environment, they can also be used

to record, document, analyze, and share information that is opaque to a user

when their mental model and the machine's interface do not communicate the

machine's operation at a functional level.

After a user interaction, a digital assistant visual avatar

may shut his eyes or vanish, but there is no need to associate such behavior

with the microphone's and camera's capabilities to continue recording.

As digital assistants become more incorporated into human

users' daily lives, data privacy issues are becoming more prominent.

Transparency becomes a significant problem to solve when

specifications, manufacturer data collecting aims, and machine actions are

potentially mismatched with user expectations.

Finally, when it comes to data storage, personal

information, and sharing methods, security becomes a concern, as hacking,

disinformation, and other types of abuse threaten to undermine faith in

technology systems and organizations.

Find Jai on Twitter | LinkedIn | Instagram

You may also want to read more about Artificial Intelligence here.

See also:

Chatbots and Loebner Prize; Mobile Recommendation Assistants; Natural Language Processing and Speech Understanding.

References & Further Reading:

Lee, Gary G., Hong Kook Kim, Minwoo Jeong, and Ji-Hwan Kim, eds. 2015. Natural Language Dialog Systems and Intelligent Assistants. Berlin: Springer.

Leviathan, Yaniv, and Yossi Matias. 2018. “Google Duplex: An AI System for Accomplishing Real-world Tasks Over the Phone.” Google AI Blog. https://ai.googleblog.com/2018/05/duplex-ai-system-for-natural-conversation.html.

Viken, Alexander. 2009. “The History of Personal Digital Assistants, 1980–2000.” Agile Mobility, April 10, 2009.

Waddell, Kaveh. 2016. “The Privacy Problem with Digital Assistants.” The Atlantic, May 24, 2016. https://www.theatlantic.com/technology/archive/2016/05/the-privacy-problem-with-digital-assistants/483950/.