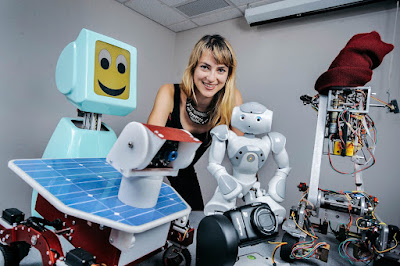

Heather Knight is a robotics and artificial intelligence specialist best known for her work in the entertainment industry.

Her Collaborative Humans and Robots: Interaction, Sociability, Machine Learning, and Art (CHARISMA) Research Lab at Oregon State University aims to apply performing arts techniques to robots.

Knight identifies herself as a social roboticist, a person who develops non-anthropomorphic—and sometimes nonverbal—machines that interact with people.

She makes robots that act in ways that are modeled after human interpersonal communication.

These behaviors include speaking styles, greeting movements, open attitudes, and a variety of other context indicators that assist humans in establishing rapport with robots in ordinary life.

In the CHARISMA Lab, where she works with social robots and so-called charismatic machines, Knight also examines social and political policy relating to robotics.

Knight founded the Marilyn Monrobot interactive robot theatre company.

She also founded the Robot Film Festival, which gives roboticists a venue to demonstrate their latest creations live and screens films relevant to the evolving state of the art in robotics and human-robot interaction.

The Marilyn Monrobot company grew out of Knight's involvement with the Syyn Labs creative collective and her observations of robots built for performance by Guy Hoffman, Director of the MIT Media Innovation Lab.

Knight's production company specializes in robot comedy.

Knight argues that theatrical spaces are ideal for social robotics research because they not only encourage playfulness, requiring robot actors to express themselves and interact, but also impose creative constraints under which robots thrive, such as a fixed stage, trial-and-error learning, and repeat performances (with manipulated variations).

According to Knight, using robots in entertainment settings is valuable because it enriches human culture, imagination, and creativity.

At the TEDWomen conference in 2010, Knight debuted Data, a stand-up comedy robot.

Data is a Nao robot built by Aldebaran Robotics (now part of SoftBank Group).

Data performs a comedy routine (drawing on roughly 200 pre-programmed jokes) while gathering feedback from the audience and fine-tuning its act in real time.

The act was developed at Carnegie Mellon University with Scott Satkin and Varun Ramakrishna.

Knight is currently working on a comedy project with Ginger the Robot.

Robot entertainment also drives the development of algorithms for artificial social intelligence.

In other words, art is used to motivate the development of new technologies.

Data and Ginger use a microphone and a machine learning system to gauge audience reactions and interpret the sounds audiences make (laughter, chatter, clapping, etc.).

Audience members are also given green and red cards, which they hold up after each joke.

A green card tells the robot that the audience enjoyed the joke.

A red card signals that the joke fell flat.
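The audience-feedback loop described above can be illustrated with a short sketch. It is not Knight's actual system: the joke tags, the equal weighting of cards and laughter, the learning rate, and every name in the code (`Joke`, `feedback_score`, `SetList`) are assumptions made purely for illustration. The idea is simply that each reaction nudges a running preference for the kinds of jokes that landed, and the next joke is chosen accordingly.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Joke:
    text: str
    tags: frozenset  # hypothetical labels, e.g. {"self-deprecating"}


def feedback_score(green: int, red: int, laughter: float) -> float:
    """Blend card counts and a laughter level in [0, 1] into a score in [-1, 1].

    The 50/50 weighting is an arbitrary assumption for this sketch.
    """
    total = green + red
    card_score = 0.0 if total == 0 else (green - red) / total
    laughter_score = 2.0 * laughter - 1.0
    return 0.5 * card_score + 0.5 * laughter_score


class SetList:
    """Picks the next joke based on how earlier jokes with similar tags were received."""

    def __init__(self, jokes, learning_rate=0.5):
        self.remaining = list(jokes)
        self.learning_rate = learning_rate
        self.tag_weights = {}  # tag -> running estimate of audience appeal

    def _appeal(self, joke):
        if not joke.tags:
            return 0.0
        return sum(self.tag_weights.get(t, 0.0) for t in joke.tags) / len(joke.tags)

    def next_joke(self):
        if not self.remaining:
            raise IndexError("no jokes left in the set list")
        # Ties go to the joke that appears earliest in the list.
        best = max(self.remaining, key=self._appeal)
        self.remaining.remove(best)
        return best

    def record(self, joke, score):
        # Move each tag's weight part of the way toward the observed score.
        for tag in joke.tags:
            old = self.tag_weights.get(tag, 0.0)
            self.tag_weights[tag] = old + self.learning_rate * (score - old)


# Example: one round of tell-a-joke, read the room, update.
show = SetList([
    Joke("My battery is at 3%. So is my confidence.", frozenset({"self-deprecating"})),
    Joke("I tried a pun once. It did not compute.", frozenset({"pun"})),
])
told = show.next_joke()
show.record(told, feedback_score(green=8, red=2, laughter=0.9))
```

A real performance system would also need to classify the microphone signal into laughter, chatter, and applause and to count cards from camera input; here those steps are assumed to have already produced the three numbers passed to `feedback_score`.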

Knight has found that good robot comedy does not need to hide the fact that the performer is a machine.

Rather, Data gets laughs by drawing attention to its machine-specific troubles and making self-deprecating remarks about its limitations.

Knight has found techniques from improvisational acting and dance to be highly useful for creating expressive, captivating robots.

In the process, she has revised the classic robotic paradigm of Sense-Plan-Act into Sensing-Character-Enactment, which more closely resembles the process used in theatrical performance.

Knight is presently experimenting with ChairBots, which are hybrid robots made by gluing IKEA wooden chairs to Neato Botvacs (a brand of intelligent robotic vacuum cleaner).

The ChairBots are being tested in public places to see how a basic robot might persuade people to get out of the way using just rudimentary gestures as a mode of communication.

They've also been used to persuade prospective café customers to come in, locate a seat, and settle down.

While pursuing degrees at the MIT Media Lab, Knight worked with Professor Cynthia Breazeal, head of the Personal Robots group, on the synthetic organic robot art piece Public Anemone for the SIGGRAPH computer graphics conference.

The installation consisted of a fiberglass cave filled with glowing creatures that moved and responded to music and people.

The cave's centerpiece robot, also named "Public Anemone," swayed and engaged with visitors, bathed in a waterfall, watered a plant, and interacted with other features of the cave.

Knight collaborated with animatronics designer Dan Stiehl to create capacitive sensor-equipped artificial tube worms.

The tube worms' fiber-optic tentacles withdrew into their tubes and changed color when a visitor reached into the cave, as though driven by a protective instinct.

The team behind Public Anemone described the project as "a step toward fully embodied robot theatrical performance" and "an example of intelligent staging."

Knight also helped with the mechanical design of the Smithsonian/Cooper-Hewitt Design Museum's "Cyberflora" kinetic robot flower garden display in 2003.

Her master's thesis at MIT focused on the Sensate Bear, a huggable robot teddy bear with full-body capacitive touch sensors that she used to investigate real-time algorithms incorporating social touch and nonverbal communication.

In 2016, Knight received her PhD from Carnegie Mellon University.

Her dissertation focused on expressive motion in low-degree-of-freedom robots.

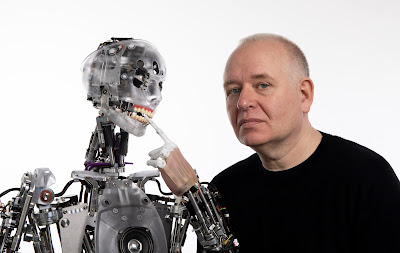

Humans do not require robots to closely resemble humans in appearance or behavior to be treated as close associates, according to Knight's research.

On the contrary, humans are quick to anthropomorphize robots and to attribute autonomy to them.

Indeed, she argues, as robots become more humanlike in appearance, people may grow uneasy or expect a much higher standard of humanlike behavior.

Knight's doctoral advisor at Carnegie Mellon was Professor Matt Mason of the School of Computer Science and Robotics Institute.

She was formerly robotic artist in residence at X, the research lab of Google's parent company, Alphabet.

Knight has previously worked with Aldebaran Robotics and NASA's Jet Propulsion Laboratory as a research scientist and engineer.

While working as an engineer at Aldebaran Robotics, Knight created the touch sensing panel for the Nao autonomous family companion robot, as well as the infrared detection and emission capabilities in its eyes.

Her work with Syyn Labs on the opening two minutes of the OK Go video "This Too Shall Pass," which features a Rube Goldberg machine, earned a UK Music Video Award.

She is currently helping Clearpath Robotics make its self-driving, mobile-transport robots more socially aware.

~ Jai Krishna Ponnappan

See also:

RoboThespian; Turkle, Sherry.

Further Reading:

Biever, Celeste. 2010. “Wherefore Art Thou, Robot?” New Scientist 208, no. 2792: 50–52.

Breazeal, Cynthia, Andrew Brooks, Jesse Gray, Matt Hancher, Cory Kidd, John McBean, Dan Stiehl, and Joshua Strickon. 2003. “Interactive Robot Theatre.” Communications of the ACM 46, no. 7: 76–84.

Knight, Heather. 2013. “Social Robots: Our Charismatic Friends in an Automated Future.” Wired UK, April 2, 2013. https://www.wired.co.uk/article/the-inventor.

Knight, Heather. 2014. How Humans Respond to Robots: Building Public Policy through Good Design. Washington, DC: Brookings Institution, Center for Technology Innovation.