Eliminating bias in artificial intelligence will require addressing both human and systemic biases.

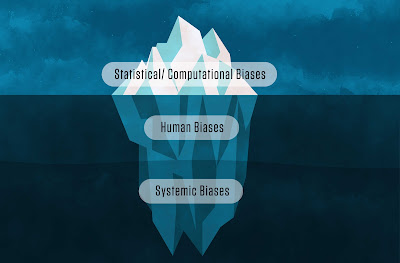

Bias in AI systems is often seen as a technical problem, but the NIST report acknowledges that human biases and systemic, institutional biases play a role as well.

Researchers at the National Institute of Standards and Technology (NIST) recommend broadening the scope of where we look for the source of these biases — beyond the machine learning processes and data used to train AI software to the broader societal factors that influence how technology is developed — as a step toward improving our ability to identify and manage the harmful effects of bias in artificial intelligence (AI) systems.

This recommendation is at the heart of a new NIST publication, Towards a Standard for Identifying and Managing Bias in Artificial Intelligence (NIST Special Publication 1270), which incorporates public comments on a draft version released last summer.

The publication provides guidelines related to the AI Risk Management Framework that NIST is creating as part of a wider effort to facilitate the development of trustworthy and responsible AI.

The main difference between the draft and final versions of the publication, according to NIST's Reva Schwartz, is the new emphasis on how bias manifests itself not only in AI algorithms and the data used to train them, but also in the societal context in which AI systems are used.

"Context is crucial," said Schwartz, principal investigator for AI bias and one of the report's authors.

"AI systems don't work in a vacuum. They assist individuals in making choices that have a direct impact on the lives of others. If we want to design trustworthy AI systems, we must take into account all of the elements that might undermine public confidence in AI. Many of these variables extend beyond the technology itself to its consequences, as shown by the responses we got from a diverse group of individuals and organizations."

NIST contributes to the research, standards, and data needed to fulfill artificial intelligence's (AI) full potential as a driver of American innovation across industries and sectors.

NIST is working with the AI community to define the technological prerequisites for cultivating confidence in AI systems that are accurate and dependable, safe and secure, explainable, and bias-free.

AI bias is harmful to humans.

AI can make decisions that affect whether a person is admitted to a school, approved for a bank loan, or accepted as a rental applicant.

It is well known that AI systems can exhibit biases that stem from their programming and data sources. Machine learning software, for example, might be trained on a dataset that underrepresents a particular gender or ethnic group.
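As a rough illustration of the underrepresentation problem, the sketch below compares each group's share of a dataset with a reference share. It is not taken from the NIST publication: the function name `representation_gaps`, the record layout, and the reference shares are all hypothetical, and a real audit would need far more context than a single number.

```python
from collections import Counter


def representation_gaps(records, group_key, reference_shares):
    """Return observed share minus reference share for each group.

    A negative value means the group is underrepresented relative to
    the (assumed) reference population.
    """
    if not records:
        raise ValueError("records must not be empty")
    counts = Counter(r[group_key] for r in records)
    total = sum(counts.values())
    return {
        group: counts.get(group, 0) / total - ref_share
        for group, ref_share in reference_shares.items()
    }


# Hypothetical toy data: 80 records from group A, 20 from group B.
records = [{"group": "A"}] * 80 + [{"group": "B"}] * 20

# Assumed reference population: both groups equally common.
reference = {"A": 0.5, "B": 0.5}

gaps = representation_gaps(records, "group", reference)
for group, gap in gaps.items():
    print(f"{group}: {gap:+.2f}")
```

In this toy data, group B makes up 20 percent of the records against an assumed reference share of 50 percent, so its gap is negative. A check like this speaks only to the statistical side of bias.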

While these computational and statistical sources of bias remain important, the new NIST publication emphasizes that they do not tell the whole story.

A fuller understanding of bias must also take into account human and systemic biases, which figure prominently in the final version.

Systemic biases result from institutions operating in ways that disadvantage certain social groups, such as discriminating against individuals based on their race.

Human biases can relate to how people use data to fill in missing information, such as a person's neighborhood influencing how likely authorities are to consider that person a crime suspect.

When human, systemic, and computational biases combine, they can form a harmful mixture, especially when there is no explicit guidance for addressing the risks of deploying AI systems.

To address these concerns, the NIST authors propose a "socio-technical" approach to mitigating bias in AI.

This approach recognizes that AI operates in a larger social context, and that purely technical efforts to solve the problem of bias will fall short.

"When it comes to AI bias concerns, organizations sometimes gravitate to highly technical solutions," Schwartz added.

"However, these techniques fall short of capturing the social effect of AI systems. The growth of artificial intelligence into many facets of public life necessitates broadening our perspective to include AI as part of the wider social system in which it functions."

According to Schwartz, socio-technical approaches to AI are an emerging field, and developing measurement techniques that take these factors into account will require a broad set of disciplines and stakeholders.

"It's critical to bring in specialists from a variety of sectors, not just engineering," she added, "and to listen to other organizations and communities about the implications of AI."

Over the next several months, NIST will host a series of public workshops aimed at drafting a technical report on addressing AI bias and connecting that report with the AI Risk Management Framework.

Visit the AI RMF workshop website for further information and to register.

A Method for Reducing the Risk of Bias in Artificial Intelligence

The National Institute of Standards and Technology (NIST) is advancing an approach for identifying and managing biases in artificial intelligence (AI) — and is asking for the public's help in improving it — in an effort to combat the often pernicious effect of biases in AI that can harm people's lives and public trust in AI.

A Proposal for Identifying and Managing Bias in Artificial Intelligence (NIST Special Publication 1270), a new publication from NIST, lays out the approach.

NIST will accept public comments on the document through September 10, 2021 (extended from the original deadline of August 5, 2021), and the authors will use the feedback to help shape the agenda of several collaborative virtual events NIST will hold in the coming months.

This series of events is intended to engage the stakeholder community and give its members the opportunity to offer feedback and recommendations for mitigating the risk of bias in AI.

"Managing the danger of bias in AI is an important aspect of establishing trustworthy AI systems, but the route to accomplishing this remains uncertain," said Reva Schwartz of the National Institute of Standards and Technology, who was one of the report's authors.

"We intend to include the community in the development of voluntary, consensus-based norms for limiting AI bias and decreasing the likelihood of negative consequences."

Bias in AI-based products and systems is a critical but still insufficiently defined component of trustworthiness.

That bias can be intentional or unintentional.

By hosting discussions and conducting research, NIST is working to move us closer to agreement on how to understand and measure bias in AI systems.

Because AI can typically make sense of information faster and more reliably than humans, it has become a transformational technology.

AI now plays a role in everything from disease diagnosis to the digital assistants on our smartphones.

However, as AI's applications have grown, we have seen that its results can be skewed by biases in the data it is fed, data that captures the real world incompletely or inaccurately.

Furthermore, some AI systems are built to model complex concepts that cannot be directly measured or captured by data, such as "criminality" or "employment suitability."

These systems rely on other factors, such as where you live or how much education you have, as proxies for the concepts they attempt to model.
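One way a proxy can introduce bias is by also tracking membership in a social group. The sketch below, an illustration and not a method from the NIST report, estimates how much better a group label can be guessed once a proxy such as a postal code is known. The function `proxy_strength`, the field names, and the toy records are all hypothetical.

```python
from collections import Counter, defaultdict


def proxy_strength(records, proxy_key, group_key):
    """Return (baseline, with_proxy) accuracy of guessing group_key.

    baseline:   always guess the most common group overall.
    with_proxy: guess the most common group within each proxy value.
    A large jump suggests the proxy carries group information.
    """
    if not records:
        raise ValueError("records must not be empty")

    overall = Counter(r[group_key] for r in records)
    baseline = overall.most_common(1)[0][1] / len(records)

    # Count group membership separately for every proxy value.
    by_proxy = defaultdict(Counter)
    for r in records:
        by_proxy[r[proxy_key]][r[group_key]] += 1

    hits = sum(c.most_common(1)[0][1] for c in by_proxy.values())
    return baseline, hits / len(records)


# Hypothetical toy data: two postal codes with very different group mixes.
records = (
    [{"zip": "11111", "group": "A"}] * 45
    + [{"zip": "11111", "group": "B"}] * 5
    + [{"zip": "22222", "group": "A"}] * 5
    + [{"zip": "22222", "group": "B"}] * 45
)

baseline, with_proxy = proxy_strength(records, "zip", "group")
print(f"baseline={baseline:.2f}, with proxy={with_proxy:.2f}")
```

In this toy data the two groups are equally common overall, yet knowing the postal code makes the group far easier to guess, so a model given the postal code alone could still treat the groups differently.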

The approach the authors propose for managing bias involves a conscientious effort to identify and manage bias at different points in an AI system's lifecycle, from initial conception through design to release.

The goal is to involve stakeholders from many groups, both within and outside the technology sector, so that perspectives that traditionally have not been heard are included.

"We want to bring together the community of AI developers of course, but we also want to involve psychologists, sociologists, legal experts and people from disadvantaged communities," said NIST's Elham Tabassi, a member of the National AI Research Resource Task Force.

"We want to hear from people whom AI affects, both those who create AI systems and those who are not directly involved in creating them."

The NIST authors' preparatory research involved a literature survey covering peer-reviewed journals, books, and popular news media, as well as industry reports and presentations.

The survey found that bias can creep into AI systems at any stage of development, often in different ways depending on the AI's purpose and the social context in which it is applied.

"An AI tool is often built for one goal, but it is subsequently utilized in a variety of scenarios," Schwartz said.

"Many AI applications have also been inadequately evaluated, if at all, in the environment for which they were designed. All these elements might cause bias to go undetected."

Because the team members acknowledge that they do not have all of the answers, Schwartz believes it is critical to get public comment, particularly from those who are not often involved in technical conversations.

"We know bias exists throughout the AI lifespan," added Schwartz.

"It would be risky to not know where your model is biased or to assume that there is none. The next stage is to figure out how to see it and deal with it."

Comments on the proposed method may be provided by downloading and completing the template form (in Excel format) and emailing it to ai-bias@list.nist.gov by Sept. 10, 2021 (extended from the initial deadline of Aug. 5, 2021).

This website will be updated with further information on the joint event series.

Find Jai on Twitter | LinkedIn | Instagram
