The Terminator, released in 1984, grossed nearly $40 million at the domestic box office, earned untold sums in ancillary markets, and spawned a multi-film franchise that continues to this day. It is one of the best-known representations of artificial intelligence in popular culture. Although most of the film takes place in 1984, it depicts a future in which Skynet, an artificial intelligence system designed for the military, becomes self-aware and wages war on humanity.

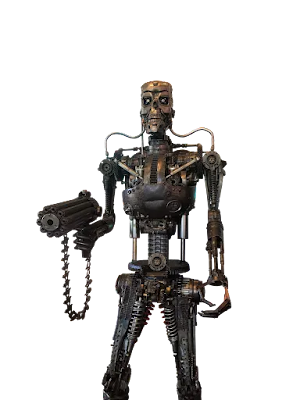

Scenes from the future show roving robotic destroyers hunting humans, who appear to be on the verge of extinction, across a battlefield littered with mechanical wreckage and human bones. Most of the film, however, follows a T-800 terminator (Arnold Schwarzenegger) sent back to 1984 to murder Sarah Connor (Linda Hamilton) before she gives birth to John Connor, humanity's future savior.

The central premise of The Terminator dramatizes what is perhaps the most prominent myth about artificial intelligence in popular culture: that intelligent machines are inherently dangerous beings capable of rebelling against humanity in pursuit of their own agenda. To appreciate the film's significance fully, it helps to look more closely at its story and at the character of the terminator itself.

In 2029, in the aftermath of a nuclear catastrophe, Earth is in the midst of a war between humans and Skynet-built robots. Although later installments of the series reveal more about Skynet, how it works remains a mystery in the first film. John Connor (who is mentioned only in passing in the film) leads a human resistance that, from the brink of defeat, eventually overcomes the machines.

To foil the Connor-led revolt, the machines build a time-travel device and send one of their terminator units back in time to assassinate Sarah Connor before she can conceive her son. To thwart the machines' plot, the human resistance sends back its own operative, Kyle Reese (Michael Biehn). Reese's mission is to protect Sarah Connor; the terminator's is to kill her.

As a result, the rest of the film, set in 1984, plays out as a cat-and-mouse chase in which the terminator repeatedly tracks down Connor and Reese, who escape each time by the skin of their teeth. In the film's climax, the terminator is burned down to its mechanical endoskeleton and pursues Connor and Reese into a factory. Reese sacrifices himself by placing a homemade pipe bomb in the terminator's midsection; the explosion kills Reese and blows the terminator in two. The terminator's torso continues to pursue Connor, who finally crushes it in a hydraulic press. The film then cuts to a pregnant Sarah Connor traveling through Mexico some months later, a scene in which Reese is revealed to be John Connor's father.

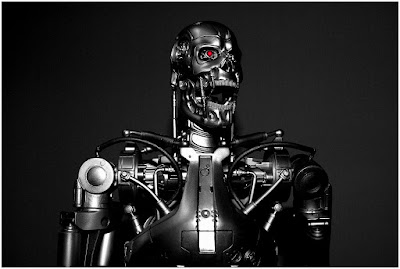

The terminator is a striking example of artificial intelligence. It can walk, talk, perceive, and act like a human being while remaining a machine programmed to kill. The film shows that it can pick up on the subtleties of human interaction and adjust its behavior based on previous experiences and encounters. In one phone conversation, it mimics the voice of Sarah Connor's mother, convincing Sarah to reveal her whereabouts. In these respects, the terminator would unquestionably pass the Turing Test, in which a human judge tries to determine whether they are communicating with a human or a machine; the machine passes if the judge cannot reliably tell the difference.

At the same time, the terminator lacks human awareness and is guided purely by mechanical logic as it carries out its mission. It is shot, run over by a Mack truck, and burned down to its endoskeleton, among other traumas, so it is safe to assume that it does not perceive pain the way humans do.

Pessimistic depictions of artificial intelligence in popular culture, such as The Terminator, offer visions of a future to be avoided. At the time the film was in development, President Ronald Reagan's Strategic Defense Initiative, unveiled in 1983 and derisively nicknamed "Star Wars" by Senator Ted Kennedy, had ratcheted up tensions with the Soviet Union. In short, the Strategic Defense Initiative was a proposed missile defense system intended to protect the United States from nuclear ballistic missile attacks. Critics feared that Reagan's bluster might spark a new nuclear arms race. Against this backdrop, The Terminator may be read as a critique of Reagan's Cold War strategy: it offers a glimpse of a possible post-apocalyptic future brought about by nuclear catastrophe and the development of ever more powerful weapons.

In sum, the film voices concern about the destructive potential of human creations and the possibility that humanity's own inventions might turn against it.

Find Jai on Twitter | LinkedIn | Instagram

See also:

Berserkers; de Garis, Hugo; Technological Singularity.
