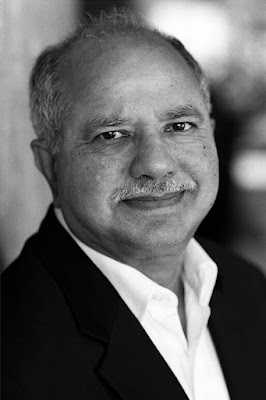

Dabbala Rajagopal "Raj" Reddy (born 1937) is an Indian American computer scientist who has made important contributions to artificial intelligence and is a recipient of the Turing Award.

He is the Moza Bint Nasser University Professor of Computer Science and Robotics at Carnegie Mellon University's School of Computer Science.

He has served on the faculties of Stanford and Carnegie Mellon universities, two of the world's leading institutions for artificial intelligence research.

He has received honors in both the United States and India for his contributions to artificial intelligence.

In 2001, the Indian government awarded him the Padma Bhushan, India's third-highest civilian honor.

In 1984, he received the Legion of Honor, France's highest honor, which was established in 1802 by Napoleon Bonaparte.

Reddy received his bachelor's degree in 1958 from the College of Engineering, Guindy, of the University of Madras, and his master's degree in 1960 from the University of New South Wales in Australia.

He then moved to the United States, where he received his doctorate in computer science from Stanford University in 1966.

He was the first in his family to earn a university degree, a common situation in rural Indian households.

After working in industry as an Applied Science Representative at IBM Australia from 1960 to 1963, he entered academia in 1966, joining the faculty of Stanford University as an Assistant Professor of Computer Science, where he stayed until 1969.

He joined Carnegie Mellon as an Associate Professor of Computer Science in 1969 and was still on its faculty as of 2020.

He rose through the ranks at Carnegie Mellon, becoming a full professor in 1973 and a University Professor in 1984.

In 1991, he was appointed dean of the School of Computer Science, a post he held until 1999.

Many schools and institutions were founded as a result of Reddy's efforts.

In 1979, he founded the Robotics Institute and served as its first director, a position he held until 1991.

During his tenure as dean, he was a driving force behind the establishment at CMU of the Language Technologies Institute, the Human-Computer Interaction Institute, the Center for Automated Learning and Discovery (now the Machine Learning Department), and the Institute for Software Research.

From 1999 to 2001, Reddy was a co-chair of the President's Information Technology Advisory Committee (PITAC).

In 2005, PITAC's responsibilities were taken over by the President's Council of Advisors on Science and Technology (PCAST).

Reddy was the president of the American Association for Artificial Intelligence (AAAI) from 1987 to 1989.

The AAAI has since been renamed the Association for the Advancement of Artificial Intelligence, in recognition of the worldwide character of a research community that began with pioneers like Reddy.

The organization's logo, acronym (AAAI), and purpose have been retained.

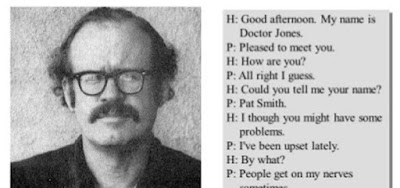

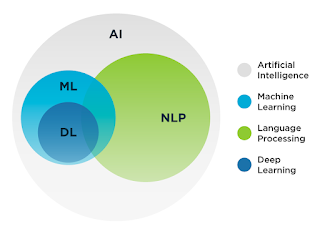

Reddy's research focused on artificial intelligence, the study of giving intelligence to computers.

He worked on voice control for robots, speaker-independent speech recognition, and unrestricted-vocabulary dictation, which made continuous speech dictation possible.

Reddy and his collaborators have made significant contributions to computer analysis of natural scenes, task-oriented computer architectures, universal access to information (a project supported by UNESCO), and autonomous robotic systems.

With his colleagues, Reddy developed the Hearsay II, Dragon, Harpy, and Sphinx I/II speech recognition systems.

The blackboard model, one of the fundamental concepts that emerged from this work, has been widely adopted in many fields of AI.

Reddy was also interested in using technology for the benefit of society, and he served as Chief Scientist at the Centre Mondial Informatique et Ressource Humaine in France.

He assisted the Indian government in establishing the Rajiv Gandhi University of Knowledge Technologies, which serves low-income rural youth.

He is a member of the governing council of the International Institute of Information Technology (IIIT) in Hyderabad.

IIIT is a nonprofit public-private partnership (N-PPP) that focuses on technology and applied research.

He served on the board of directors of the Emergency Management and Research Institute (EMRI), a nonprofit public-private partnership that provides public emergency medical services.

EMRI has also assisted with emergency management in neighboring Sri Lanka.

In addition, he was a member of the Health Care Management Research Institute (HMRI).

HMRI provides non-emergency health-care consultation to rural populations, particularly in Andhra Pradesh, India.

In 1994, Reddy and Edward A. Feigenbaum shared the Turing Award, the highest honor in computer science, and Reddy became the first person of Indian and Asian descent to receive the award.

He received the IBM Research Ralph Gomory Fellow Award in 1991, the Okawa Foundation's Okawa Prize in 2004, the Honda Foundation's Honda Prize in 2005, and the Vannevar Bush Award from the United States National Science Board in 2006.

Reddy is a fellow of the Institute of Electrical and Electronics Engineers (IEEE), the Acoustical Society of America, and the American Association for Artificial Intelligence, among other prestigious organizations.


See also:

Autonomous and Semiautonomous Systems; Natural Language Processing and Speech Understanding.

References & Further Reading:

Reddy, Raj. 1988. “Foundations and Grand Challenges of Artificial Intelligence.” AI Magazine 9, no. 4 (Winter): 9–21.

Reddy, Raj. 1996. “To Dream the Possible Dream.” Communications of the ACM 39, no. 5 (May): 105–12.