Knowledge engineering (KE) is a subfield of artificial intelligence that aims to incorporate expert knowledge into a formal automated system so that the system can produce the same or comparable problem-solving results as human experts working with the same data.

Knowledge engineering, more precisely, is a discipline that

develops methodologies for constructing large knowledge-based systems (KBS),

also known as expert systems, using appropriate methods, models, tools, and

languages.

For knowledge elicitation, modern knowledge engineering uses the Knowledge Acquisition and Documentation Structuring (KADS) approach; hence, the development of knowledge-based systems is considered a modeling effort (i.e., knowledge engineering builds computer models).

It's challenging to codify the knowledge acquisition process

since human specialists' knowledge is a combination of skills, experience, and

formal knowledge.

As a result, rather than directly transferring knowledge

from human experts to the programming system, the experts' knowledge is

modeled.

Simultaneously, direct simulation of the entire cognitive

process of experts is extremely difficult.

The resulting computer models are therefore expected to achieve results comparable to those of experts solving problems in the domain, rather than to replicate the experts' cognitive capabilities.

As a result, knowledge engineering focuses on modeling and

problem-solving methods (PSM) that are independent of various representation

formalisms (production rules, frames, etc.).

The problem solving method is a key component of knowledge

engineering, and it refers to the knowledge-level specification of a reasoning

pattern that can be used to complete a knowledge-intensive task.

Each problem-solving method is a pattern that offers template structures for addressing a particular kind of task.

Problem-solving methods are often categorized by the type of task they address, using terms such as "diagnosis," "classification," and "configuration."

Two examples are the PSM "Cover-and-Differentiate" for diagnostic tasks and the PSM "Propose-and-Revise" for parametric design tasks.

Any problem-solving approach is predicated on the notion

that the suggested method's logical adequacy corresponds to the computational

tractability of the system implementation based on it.
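
The Propose-and-Revise pattern named above can be illustrated with a minimal sketch. It assumes a parametric design is a dictionary of numeric parameters and that each constraint comes paired with a fix that repairs a violation; the beam-sizing domain, the function names, and the numbers are hypothetical and serve only as illustration.

```python
from typing import Callable, Dict, List, Optional, Tuple

Design = Dict[str, float]
Constraint = Callable[[Design], bool]   # True when the design satisfies it
Fix = Callable[[Design], Design]        # returns a revised copy of the design


def propose_and_revise(
    propose: Callable[[], Design],
    constraints: List[Tuple[Constraint, Fix]],
    max_revisions: int = 20,
) -> Optional[Design]:
    """Propose an initial design, then revise it until every constraint holds."""
    design = propose()
    for _ in range(max_revisions):
        violated = [fix for check, fix in constraints if not check(design)]
        if not violated:
            return design
        # Revise: apply the fix attached to the first violated constraint.
        design = violated[0](design)
    # Accept the last revision only if it finally satisfies everything.
    return design if all(check(design) for check, _ in constraints) else None


# Hypothetical toy domain: choose a beam thickness that carries a given load.
def propose_beam() -> Design:
    return {"load": 900.0, "thickness": 2.0}


def strong_enough(design: Design) -> bool:
    return design["thickness"] * 200.0 >= design["load"]


def thicken(design: Design) -> Design:
    return {**design, "thickness": design["thickness"] + 1.0}


result = propose_and_revise(propose_beam, [(strong_enough, thicken)])
print(result)
```

The method itself never mentions beams: the domain knowledge enters only through the proposal function and the constraint and fix pairs, which is what makes the pattern reusable.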

The heuristic classification PSM, an inference pattern that describes the behavior of knowledge-based systems in terms of goals and the knowledge required to attain them, was often used in early expert systems.

Inference actions and knowledge roles, as well as their

relationships, are covered by this problem-solving strategy.

The relationships specify how domain knowledge is used in each inference action.

The knowledge roles are observables, abstract observables, solution abstractions, and solutions, while the inference actions are abstract, heuristic match, and refine.
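
The three inference actions and four knowledge roles can be sketched as a small pipeline. This is an illustrative toy, not a reconstruction of any historical system: the rule tables, thresholds, and names below are all assumptions.

```python
from typing import Dict, List, Set

# Hypothetical domain knowledge; every name and threshold is illustrative.
ABSTRACTION_RULES = {
    "temperature_c": lambda v: "fever" if v >= 38.0 else "normal_temperature",
    "white_cell_count": lambda v: "high_wbc" if v > 11.0 else "normal_wbc",
}
HEURISTIC_MATCHES = {frozenset({"fever", "high_wbc"}): "infection"}
REFINEMENTS = {"infection": {"cough": "respiratory_infection"}}


def abstract(observables: Dict[str, float]) -> Set[str]:
    """Observables -> abstract observables."""
    return {
        ABSTRACTION_RULES[name](value)
        for name, value in observables.items()
        if name in ABSTRACTION_RULES
    }


def heuristic_match(abstractions: Set[str]) -> List[str]:
    """Abstract observables -> solution abstractions."""
    return [
        solution
        for pattern, solution in HEURISTIC_MATCHES.items()
        if pattern <= abstractions
    ]


def refine(solution_abstraction: str, findings: Set[str]) -> List[str]:
    """Solution abstraction -> more specific solutions, if any finding supports one."""
    specific = REFINEMENTS.get(solution_abstraction, {})
    return sorted(sol for finding, sol in specific.items() if finding in findings)


def classify(observables: Dict[str, float], findings: Set[str]) -> List[str]:
    solutions: List[str] = []
    for abstraction in heuristic_match(abstract(observables)):
        # Fall back to the abstraction itself when it cannot be refined.
        solutions.extend(refine(abstraction, findings) or [abstraction])
    return solutions


print(classify({"temperature_c": 39.2, "white_cell_count": 14.0}, {"cough"}))
```

Each function corresponds to one inference action, and each table plays the part of the domain knowledge that the action consumes.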

The heuristic classification PSM requires hierarchically organized models of both observables and solutions for the "abstract" and "refine" steps, making it suited to acquiring static domain knowledge.

In the late 1980s, knowledge engineering modeling

methodologies shifted toward role limiting methods (RLM) and generic tasks

(GT).

The idea of the "knowledge role" is utilized in

role-limiting methods to specify how specific domain knowledge is employed in

the problem-solving process.

An RLM wraps a PSM by describing it in generic terms so that it can be reused.

However, because each RLM covers only a single PSM, the approach is ill-suited to problems that require several methods.

Configurable role limiting methods (CRLM) are an extension

of the role limiting methods concept, offering a predetermined collection of

RLMs as well as a fixed scheme of knowledge categories.

Each member method may be applied to a different subtask of a task, but introducing a new method is difficult because it requires changes to the established knowledge categories.

The generic task method includes a predefined scheme of knowledge types and an inference mechanism, as well as a general description of input and output.

The generic task is based on the "strong interaction problem hypothesis," which claims that the structure and representation of domain knowledge are completely determined by its use.

Each generic task uses knowledge and applies control strategies tailored to that knowledge.

Because the control techniques are more domain-specific, the

actual knowledge acquisition employed in GT is more precise in terms of problem-solving

step descriptions.

As a result, the design of a specialized knowledge-based system may be thought of as the instantiation of the predefined knowledge types using domain-specific terms.

The drawback of GT is that the predefined problem-solving strategy may not match the strategy best suited to the task at hand.

The task structure (TS) approach seeks to address GT's shortcomings by distinguishing between a task and the method used to accomplish it.

As a result, each task structure postulates how the problem might be solved using a set of generic tasks, as well as what knowledge has to be acquired or produced for these tasks.
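
The separation of task and method can be pictured as a small tree in which a task lists alternative methods and each method lists the subtasks it needs. The sketch below is only one possible encoding; the class names and the example decomposition are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Method:
    name: str
    subtasks: List["Task"] = field(default_factory=list)


@dataclass
class Task:
    name: str
    methods: List[Method] = field(default_factory=list)  # alternative ways to solve it


def primitive_tasks(task: Task) -> List[str]:
    """Leaf tasks reached when the first listed method is chosen at every level."""
    if not task.methods:
        return [task.name]
    leaves: List[str] = []
    for subtask in task.methods[0].subtasks:
        leaves.extend(primitive_tasks(subtask))
    return leaves


# Hypothetical decomposition of a diagnosis task.
diagnose = Task("diagnose", [
    Method("generate-and-test", [
        Task("generate hypotheses"),
        Task("test hypotheses", [
            Method("compare", [Task("predict"), Task("observe")]),
        ]),
    ]),
])

print(primitive_tasks(diagnose))
```

Because methods are attached to tasks rather than fused with them, a different method can be swapped in for "test hypotheses" without touching the rest of the structure.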

Because several models are required, modeling frameworks were created to cover the various aspects of knowledge engineering.

The most widely used framework, CommonKADS (which builds on KADS), comprises the organizational model, task model, agent model, communication model, expertise model, and design model.

The organizational model describes the structure of the organization as well as the tasks that each unit performs.

The task model describes tasks in a hierarchical order.

Each agent's skills in task execution are specified by the

agent model.

The communication model specifies how agents interact with

one another.

The expertise model is the most significant model; it employs several layers and focuses on representing domain-specific knowledge (the domain layer) as well as the inferences used in the reasoning process (the inference layer).

The expertise model also includes a task layer, which is concerned with task decomposition.

The system architecture and computational mechanisms used to

make the inference are described in the design model.

In CommonKADS, there is a clear distinction between

domain-specific knowledge and generic problem-solving techniques, allowing

various problems to be addressed by constructing a new instance of the domain

layer and utilizing the PSM on a different domain.
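
The reuse described above can be sketched by keeping the method generic and passing the domain layer in as data. The following is a minimal illustration under assumed names (`DomainLayer`, `prune_classification`) and with invented toy domains; it is not CommonKADS notation.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class DomainLayer:
    """Domain-specific knowledge: each candidate class and its expected features."""
    expected: Dict[str, Dict[str, str]]


def prune_classification(domain: DomainLayer, observations: Dict[str, str]) -> List[str]:
    """Generic method: keep every candidate that no observation contradicts."""
    return [
        candidate
        for candidate, features in domain.expected.items()
        # A feature that was not observed cannot contradict the candidate.
        if all(observations.get(key, value) == value for key, value in features.items())
    ]


# Two unrelated, hypothetical domain layers reuse the same method unchanged.
fruit = DomainLayer({
    "apple": {"color": "red", "shape": "round"},
    "banana": {"color": "yellow", "shape": "long"},
})
car_faults = DomainLayer({
    "flat_battery": {"lights": "off"},
    "empty_tank": {"lights": "on", "gauge": "empty"},
})

print(prune_classification(fruit, {"color": "yellow"}))
print(prune_classification(car_faults, {"lights": "on"}))
```

Switching domains means constructing a new `DomainLayer` instance; the method's code is untouched.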

Several libraries of problem-solving methods are now available for use in development.

They are distinguished by several key characteristics: whether the library was created for a specific task or has a broader scope; whether the library is formal, informal, or implemented; whether the library uses fine- or coarse-grained PSMs; and, lastly, the library's size.

Recently, some research has been carried out with the goal

of unifying existing libraries by offering adapters that convert task-neutral

PSM to task-specific PSM.
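
One way to picture such an adapter is as a function that translates task vocabulary into the inputs a task-neutral method expects. In the sketch below, plain hill climbing stands in for the task-neutral PSM and a hypothetical adapter turns it into a diagnosis method; the fault table and the scoring scheme are assumptions made for illustration.

```python
from typing import Callable, Dict, FrozenSet, Iterable, Set, Tuple

Hypothesis = FrozenSet[str]


def hill_climb(start, neighbours: Callable, score: Callable):
    """Task-neutral method: move to the best neighbour until nothing improves."""
    current = start
    while True:
        best = max(neighbours(current), key=score, default=current)
        if score(best) <= score(current):
            return current
        current = best


def diagnosis_adapter(
    causes: Dict[str, Set[str]], symptoms: Set[str]
) -> Tuple[Hypothesis, Callable[[Hypothesis], Iterable[Hypothesis]], Callable[[Hypothesis], float]]:
    """Adapter: express a diagnosis task in the search terms hill_climb expects."""

    def neighbours(hypothesis: Hypothesis):
        # A neighbour adds exactly one more suspected fault.
        return [hypothesis | {fault} for fault in sorted(causes) if fault not in hypothesis]

    def score(hypothesis: Hypothesis) -> float:
        explained = set().union(*(causes[fault] for fault in hypothesis)) & symptoms
        # Reward explained symptoms; lightly penalise larger hypotheses.
        return len(explained) - 0.1 * len(hypothesis)

    return frozenset(), neighbours, score


def diagnose(causes: Dict[str, Set[str]], symptoms: Set[str]) -> Set[str]:
    return set(hill_climb(*diagnosis_adapter(causes, symptoms)))


# Hypothetical fault model: fault -> symptoms it would cause.
CAUSES = {
    "flat_battery": {"no_lights", "no_start"},
    "empty_tank": {"no_start"},
    "worn_tyres": {"skidding"},
}
print(diagnose(CAUSES, {"no_lights", "no_start"}))
```

The search routine knows nothing about faults or symptoms; all task-specific vocabulary lives in the adapter.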

The MIKE (model-based and incremental knowledge engineering)

method, which proposes integrating semiformal and formal specification and

prototyping into the framework, grew out of the creation of CommonKADS.

As a result, MIKE divides the entire process of developing

knowledge-based systems into a number of sub-activities, each of which focuses

on a different aspect of system development.

The Protégé method makes use of PSMs and ontologies, with an ontology being defined as an explicit specification of a shared conceptualization that holds in a particular context.

Although Protégé can in principle work with ontologies of any kind, the ones it actually uses are domain ontologies, which describe the shared conceptualization of a domain, and method ontologies, which specify the concepts and relations used by problem-solving methods.

In addition to problem-solving techniques, the development

of knowledge-based systems necessitates the creation of particular languages

capable of defining the information needed by the system as well as the

reasoning process that will use that knowledge.

The purpose of such languages is to give a clear and formal

foundation for expressing knowledge models.

Furthermore, some of these formal languages may be

executable, allowing simulation of knowledge model behavior on specified input

data.

In the early years, knowledge was encoded directly in rule-based implementation languages.

This caused a number of problems, including the inability to express certain kinds of knowledge, the difficulty of ensuring a consistent representation of different types of knowledge, and a lack of detail.

Modern approaches to language development aim to target and formalize the conceptual models of knowledge-based systems, allowing users to precisely define the goals and process for obtaining models, as well as the functionality of inference actions and the precise semantics of the various domain knowledge elements.

The majority of these epistemological languages include

primitives like constants, functions, and predicates, as well as certain

mathematical operations.

Object-oriented or frame-based languages, for example,

define a wide range of modeling primitives such as objects and classes.

KARL, (ML)², and DESIRE are the best-known examples of such languages.

KARL is a language that employs a variant of Horn logic.

It was created as part of the MIKE project and combines two forms of logic to target the KADS expertise model: L-KARL and P-KARL.

L-KARL is a variant of frame logic that may be used for the inference and domain layers.

It is, in fact, a combination of first-order logic and semantic data modeling primitives.

P-KARL is a specification language for the task layer and, in some versions, a dynamic logic.
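
To give a feel for what reasoning over Horn clauses involves, here is a minimal forward-chaining sketch over propositional Horn rules. It is written in Python rather than KARL, does not reproduce KARL's syntax or semantics, and uses made-up facts and rules.

```python
from typing import FrozenSet, List, Set, Tuple

# A propositional Horn rule: if every atom in the body holds, the head holds.
Rule = Tuple[FrozenSet[str], str]


def forward_chain(facts: Set[str], rules: List[Rule]) -> Set[str]:
    """Apply the rules repeatedly until no new atom can be derived."""
    derived = set(facts)
    changed = True
    while changed:
        changed = False
        for body, head in rules:
            if head not in derived and body <= derived:
                derived.add(head)
                changed = True
    return derived


# Hypothetical rule base.
RULES: List[Rule] = [
    (frozenset({"can_fly"}), "can_migrate"),
    (frozenset({"bird", "healthy"}), "can_fly"),
]

print(sorted(forward_chain({"bird", "healthy"}, RULES)))
```

The loop reaches a fixed point regardless of rule order, which is why the second rule can feed the first even though it is listed later.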

(ML)² is a formalization language for KADS expertise models.

The language combines an extension of first-order logic for specifying the domain layer, first-order meta-logic for the inference layer, and quantified dynamic logic for the task layer.

DESIRE (Design and Specification of Interacting Reasoning Components) is built on the concept of compositional architecture.

It specifies the dynamic reasoning process using temporal logics.

Transactions describe the interaction between components in knowledge-based systems, and control flow between any two components is specified as a set of control rules.

Each component has an associated meta-level description.

The meta level specifies the dynamic aspects of the object level declaratively.

The need to design large knowledge-based systems prompted

the development of knowledge engineering, which entails creating a computer

model with the same problem-solving capabilities as human experts.

Knowledge engineering views knowledge-based systems as

operational systems that should display some desirable behavior, and provides

modeling methodologies, tools, and languages to construct such systems.

~ Jai Krishna Ponnappan

See also:

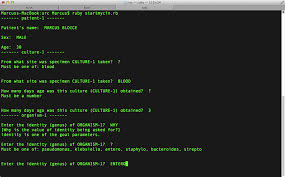

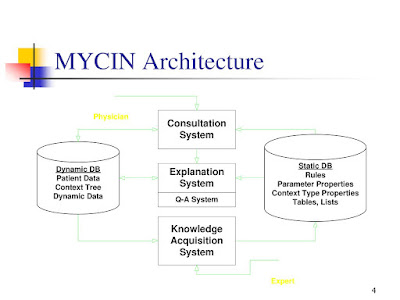

Clinical Decision Support Systems; Expert Systems; INTERNIST-I and QMR; MOLGEN; MYCIN.

Further Reading:

Schreiber, Guus. 2008. “Knowledge Engineering.” In Foundations of Artificial Intelligence, vol. 3, edited by Frank van Harmelen, Vladimir Lifschitz, and Bruce Porter, 929–46. Amsterdam: Elsevier.

Studer, Rudi, V. Richard Benjamins, and Dieter Fensel. 1998. “Knowledge Engineering: Principles and Methods.” Data & Knowledge Engineering 25, no. 1–2 (March): 161–97.

Studer, Rudi, Dieter Fensel, Stefan Decker, and V. Richard Benjamins. 1999. “Knowledge Engineering: Survey and Future Directions.” In XPS 99: German Conference on Knowledge-Based Systems, edited by Frank Puppe, 1–23. Berlin: Springer.