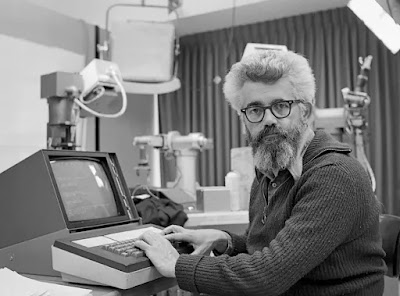

Rudolf von Bitter Rucker (1946–) is an American novelist, mathematician, and computer scientist, and a great-great-great-grandson of the philosopher Georg Wilhelm Friedrich Hegel (1770–1831).

Rucker is best known for his sarcastic, mathematics-heavy science fiction, although he has written in a variety of fictional and nonfictional genres.

His Ware tetralogy (1982–2000) is regarded as one of the foundational works of the cyberpunk literary movement.

Rucker graduated from Rutgers University with a Ph.D. in mathematics in 1973.

He shifted from teaching mathematics at colleges in the US and Germany to teaching computer science at San José State University, where he ultimately became a professor before retiring in 2004.

Rucker has forty publications to his credit, including science fiction novels, short story collections, and nonfiction works.

His nonfiction works span mathematics, cognitive science, philosophy, and computer science, covering topics such as the fourth dimension and the meaning of computation.

His popular mathematics book Infinity and the Mind: The Science and Philosophy of the Infinite (1982) is still in print from Princeton University Press.

Rucker established himself in the cyberpunk genre with the Ware series (Software 1982, Wetware 1988, Freeware 1997, and Realware 2000).

Software received the inaugural Philip K. Dick Award, a prestigious American science fiction award that has been presented every year since 1983, following Dick's death in 1982.

Wetware also received this award, in a tie with Paul J. McAuley's Four Hundred Billion Stars, for 1988.

The series was reprinted in 2010 as a single volume, The Ware Tetralogy, which Rucker has made available for free online as an e-book under a Creative Commons license.

The protagonist of the Ware series is Cobb Anderson, a retired roboticist who has fallen from favor for creating sentient robots with free will, known as boppers.

The boppers want to reward him with immortality through mind uploading; unfortunately, this procedure requires the complete destruction of Cobb's brain, which the boppers do not consider necessary hardware.

In Wetware, a bopper named Berenice wants to impregnate Cobb's niece in order to produce a human-machine hybrid.

Humanity retaliates by unleashing a mold that kills boppers, but this chipmold thrives on the cladding that covers the boppers' exteriors, and the end result is an organic-machine hybrid.

Freeware centers on these lifeforms, now known as moldies, which are generally detested by biological humans.

This story also introduces extraterrestrial intelligences, who in Realware give various kinds of human and artificial beings advanced technology and the power to alter reality.

The book Postsingular, published in 2007, was the first of Rucker's works to be distributed under a Creative Commons license.

The book, set in San Francisco, addresses the emergence of nanotechnology, first in a dystopian and later in a utopian scenario.

In the first section, a rogue engineer creates nants, which convert Earth into a virtual replica of itself, destroying the planet in the process, until a child manages to reverse their programming.

The narrative then turns to orphids, a new kind of nanotechnology that allows people to become cognitively enhanced, hyperintelligent beings.

Although the Ware tetralogy and Postsingular have been classified as cyberpunk, Rucker's fiction has been seen as difficult to label, since it combines hard science with humor, graphic sex, and constant drug use.

"Happily, Rucker himself has established a phrase to capture his unusual mix of commonplace reality and outrageous fantasy: transrealism," writes science fiction historian Rob Latham (Latham 2005, 4).

"Transrealism is not so much a form of SF as it is a sort of avant-garde literature," Rucker writes in "A Transrealist Manifesto," published in 1983 (Rucker 1983, 7).

"This means writing SF about yourself, your friends, and your local surroundings, transmuted in some science-fictional fashion," he noted in a 2002 interview. "Using actual life as a basis lends your writing a literary quality and keeps you from using clichés" (Brunsdale 2002, 48).

Rucker collaborated with cyberpunk author Bruce Sterling on the short story collection Transreal Cyberpunk, which was released in 2016.

Rucker decided to write his autobiography, Nested Scrolls, after suffering a brain hemorrhage in 2008.

Published in 2011, it won the Emperor Norton Award for "extraordinary invention and creativity unhindered by the constraints of paltry reason."

His most recent work is Million Mile Road Trip (2019), a science fiction novel about a group of human and nonhuman characters on an intergalactic road trip.

See also:

Digital Immortality; Nonhuman Rights and Personhood; Robot Ethics.

References & Further Reading:

Brunsdale, Mitzi. 2002. “PW talks with Rudy Rucker.” Publishers Weekly 249, no. 17 (April 29): 48. https://archive.publishersweekly.com/?a=d&d=BG20020429.1.82&srpos=1&e=-------en-20--1--txt-txIN%7ctxRV-%22PW+talks+with+Rudy+Rucker%22---------1.

Latham, Rob. 2005. “Long Live Gonzo: An Introduction to Rudy Rucker.” Journal of the Fantastic in the Arts 16, no. 1 (Spring): 3–5.

Rucker, Rudy. 1983. “A Transrealist Manifesto.” The Bulletin of the Science Fiction Writers of America 82 (Winter): 7–8.

Rucker, Rudy. 2007. “Postsingular.” https://manybooks.net/titles/ruckerrother07postsingular.html.

Rucker, Rudy. 2010. The Ware Tetralogy. Gaithersburg, MD: Prime Books.