Sherry Turkle (1948–) has a background in sociology and psychology, and her work focuses on human-technology interaction.

While her research in the 1980s focused on how technology affects people's thinking, her work since the 2000s has become more critical of how technology is used at the expense of building and maintaining meaningful interpersonal connections.

She has examined the use of artificial intelligence in products such as children's toys and robotic pets for the elderly to highlight what people lose when interacting with such objects.

Turkle has closely followed AI breakthroughs as a professor at the Massachusetts Institute of Technology (MIT) and the founder of the MIT Initiative on Technology and Self.

In Life on the Screen: Identity in the Age of the Internet (1995), she highlights a conceptual change in the understanding of AI that occurred between the 1960s and 1980s, substantially changing the way humans connect to and interact with AI.

She claims that early AI paradigms depended on extensive preprogramming and employed a rule-based concept of intelligence.

However, this viewpoint has given way to one that considers intelligence to be emergent.

This emergent paradigm, which had become the recognized mainstream view by 1990, holds that intelligence arises from a much simpler set of learning algorithms.

The emergent method, according to Turkle, aims to emulate the way the human brain functions, helping to break down barriers between computers and nature, and more generally between the natural and the artificial.

In summary, an emergent approach to AI allows people to relate to the technology more easily, even thinking of AI-based programs and gadgets as children.

The rising acceptance of the emergent paradigm of AI, and the enhanced relatability it heralds, represents a significant turning point not just for the field of AI but also for Turkle's research and writing on the subject.

Turkle began to employ ethnographic research techniques to study the relationship between humans and their gadgets in two edited collections, Evocative Objects: Things We Think With (2007) and The Inner History of Devices (2008).

In The Inner History of Devices, she emphasized that her intimate ethnography, or the ability to "listen with a third ear," is required to get past the advertising-based clichés that are often employed when discussing technology.

This method involves setting aside time for quiet reflection so that participants may think carefully about their interactions with their devices.

Turkle used similar intimate ethnographic approaches in her second major book, Alone Together: Why We Expect More from Technology and Less from Each Other (2011), to argue that the increasing connection between people and the technology they use is harmful.

These issues are connected to the increased use of social media as a form of communication, as well as the continued familiarity and relatability of technological gadgets, which stems from the emergent AI paradigm that has become practically omnipresent.

She traced the origins of the dilemma back to early pioneers in the field of cybernetics, citing, for example, Norbert Wiener's speculations on the idea of transmitting a human being across a telegraph line in his book God & Golem, Inc. (1964).

Because it reduces both people and technology to information, this approach to cybernetic thinking blurs the boundaries between them.

In terms of AI, this implies that it doesn't matter whether the machines with which we interact are really intelligent.

Turkle claims that by engaging with and caring for these technologies, we may deceive ourselves into feeling we are in a relationship, causing us to treat them as if they were sentient.

She described this trend in a 2006 presentation titled "Artificial Intelligence at 50: From Building Intelligence to Nurturing Sociabilities" at the Dartmouth Artificial Intelligence Conference.

She identified the 1997 Tamagotchi, the 1998 Furby, and the 2000 My Real Baby as early versions of what she calls relational artifacts, which are more broadly referred to as social machines in the literature.

The main difference between these devices and earlier children's toys is that these devices come pre-animated and ready for a relationship, whereas earlier toys required children to project a relationship onto them.

Turkle argues that this change is about our human weaknesses as much as it is about computer capabilities.

In other words, simply caring for an object increases the likelihood of not only seeing it as intelligent but also feeling a connection to it.

This sense of connection is more relevant to the typical person engaging with these technologies than abstract philosophical considerations concerning the nature of their intelligence.

Turkle delves further into the ramifications of people engaging with AI-based technologies in both Alone Together and Reclaiming Conversation: The Power of Talk in a Digital Age (2015).

In Alone Together, she gives the example of Adam, who enjoys the gratitude of the AI bots he rules over in the game Civilization.

Adam appreciates being able to create something new when playing.

Turkle, however, is skeptical of this interaction, stating that Adam's play is not actual creation but rather the sensation of creation, and that it is problematic because it lacks meaningful pressure or risk.

In Reclaiming Conversation, she expands on this point, suggesting that such artificial social partners provide only a perception of companionship.

This matters because of the value of human connection and what may be lost in relationships that provide only a sensation or perception of friendship rather than true friendship.

Turkle believes that this transition is critical.

She claims that although connections with AI-enabled technologies may have certain advantages, they pale in comparison to what is missing: the full complexity and inherent contradictions that define what it is to be human.

A person's connection with an AI-enabled technology is not as intricate as one's interaction with other individuals.

Turkle claims that as individuals have become more accustomed to and dependent on technological gadgets, the definition of friendship has changed. People's expectations for companionship have been simplified as a result, and the benefits they hope to gain from relationships have been reduced. People now tend to associate friendship only with the concept of interaction, ignoring the more nuanced sentiments and disagreements that are typical of relationships. On this view, one may form a relationship with gadgets simply by engaging with them. Conversations between humans have become merely transactional as human communication has shifted away from face-to-face conversation and toward interaction mediated by devices.

In other words, the most that can be expected is engagement.

Turkle, who has a background in psychoanalysis, claims that this kind of transactional communication means users spend less time learning to view the world through the eyes of another person, which is a crucial skill for empathy.

Drawing together these strands of argument, Turkle argues that we are in a robotic moment in which people yearn for, and in some circumstances prefer, AI-based robotic companionship over that of other humans.

For example, some people enjoy conversing with their iPhone's Siri virtual assistant because they aren't afraid of being judged by it, as evidenced by a series of Siri commercials featuring celebrities talking to their phones.

Turkle has a problem with this because these devices can only respond as if they understand what is being said.

In reality, AI-based gadgets are confined to comprehending the literal meanings of data stored on the device.

They can decipher the contents of phone calendars and emails, but they have no idea what any of this data means to the user.

To an AI-based device, there is no discernible difference between a calendar appointment for car maintenance and one for chemotherapy.

A person may lose sight of what it is to have an authentic dialogue with another human when entangled in a variety of these robotic connections with a growing number of technologies.

While Reclaiming Conversation documents deteriorating conversation skills and decreasing empathy, it ultimately ends on a positive note.

Because people are becoming increasingly dissatisfied with their relationships, there may be a chance for face-to-face human communication to reclaim its vital role.

Turkle's recommendations focus on reducing the amount of time people spend on their phones, but AI's role in this interaction is equally critical.

Users must accept that their virtual assistant connections will never be able to replace face-to-face interactions. Doing so will require being more deliberate in how one uses devices, prioritizing in-person interactions over the faster and easier interactions provided by AI-enabled devices.

See also:

Blade Runner; Chatbots and Loebner Prize; ELIZA; General and Narrow AI; Moral Turing Test; PARRY; Turing, Alan; 2001: A Space Odyssey.

References And Further Reading

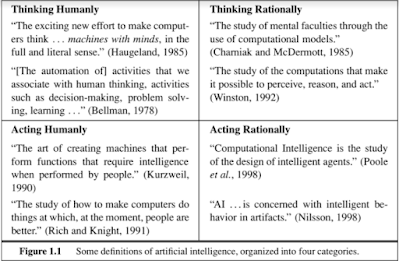

- Haugeland, John. 1997. “What Is Mind Design?” Mind Design II: Philosophy, Psychology, Artificial Intelligence, edited by John Haugeland, 1–28. Cambridge, MA: MIT Press.

- Searle, John R. 1997. “Minds, Brains, and Programs.” Mind Design II: Philosophy, Psychology, Artificial Intelligence, edited by John Haugeland, 183–204. Cambridge, MA: MIT Press.

- Turing, A. M. 1997. “Computing Machinery and Intelligence.” Mind Design II: Philosophy, Psychology, Artificial Intelligence, edited by John Haugeland, 29–56. Cambridge, MA: MIT Press.