What Is Robot Ethics?

Robot ethics is a branch of technology ethics that studies, clarifies, and addresses the moral possibilities and concerns that come from the design, development, and deployment of robots and other autonomous systems.

"Robot ethics" is an umbrella phrase that encompasses a number of similar but distinct projects and undertakings.

The earliest known articulation of a robot ethics may be found in fiction, notably in Isaac Asimov's collection of robot tales, I, Robot (1950).

Asimov introduced the Three Laws of Robotics in the short story "Runaround," which was first published in the March 1942 issue of Astounding Science Fiction:

1. A robot may not injure a human being or, through inaction, allow a human being to come to harm.

2. A robot must obey the orders given it by human beings except where such orders would conflict with the First Law.

3. A robot must protect its own existence as long as such protection does not conflict with the First or Second Laws.

(Asimov 1950, 40)

In his 1985 book Robots and Empire, Asimov adds a fourth law to the sequence, which he calls the "zeroth law," numbered so that lower-numbered laws continue to take precedence over higher-numbered ones.

The laws are both functionalist and anthropocentric by design, outlining a series of layered limits on robot conduct in order to protect the interests and well-being of human individuals and communities.
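The layered ordering described here can be illustrated with a toy sketch. It assumes, purely for illustration, that each candidate action already comes with true/false flags saying which law it would violate; the `Action` class, its field names, and the `choose` function are hypothetical and are not taken from Asimov or from the robotics literature. Producing such flags for real situations is the hard part, and the sketch says nothing about how that could be done.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    """A candidate action with hypothetical, pre-computed violation flags."""
    name: str
    harms_human: bool      # would violate the First Law
    disobeys_order: bool   # would violate the Second Law
    endangers_self: bool   # would violate the Third Law


def violation_profile(action: Action) -> tuple:
    # Tuples compare lexicographically and False < True, so a First Law
    # violation outweighs any combination of lower-priority violations.
    return (action.harms_human, action.disobeys_order, action.endangers_self)


def choose(actions):
    """Return the action whose violations are least severe in law order."""
    return min(actions, key=violation_profile)


candidates = [
    Action("obey order, bystander hurt", True, False, False),
    Action("refuse order, robot safe", False, True, False),
    Action("refuse order, robot damaged", False, True, True),
]
best = choose(candidates)
print(best.name)  # refuse order, robot safe
```

The ordering itself takes one line to express; everything difficult is hidden in the assumption that the three flags are known in advance.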

Despite this, many have criticized the laws as weak and impracticable as the basis of a true moral code.

Asimov devised the laws to generate captivating science fiction tales, not to address real-world problems involving machine action and robot behavior.

As a result, Asimov's rules were never meant to constitute a full and final set of instructions for real robots.

He used the rules to create dramatic tension, imaginative circumstances, and character struggle in his stories.

"Asimov's Three Laws of Robotics are literary techniques, not technical concepts," writes Lee McCauley (2007, 160).

Theorists and practitioners in the domains of robotics and computer ethics have found Asimov's laws to be severely inadequate for daily practice.

Susan Leigh Anderson tackles this problem head-on, showing not only that Asimov ignored his own principles as a basis for machine ethics, but also that the laws are inadequate as a foundation for an ethical framework or system (Anderson 2008, 487–93).

As a result, although academics and developers are acquainted with the Three Laws of Robotics, they are also aware that the laws are neither computable nor implementable in any meaningful way.

Beyond Asimov's original science fiction invention, the scientific literature has developed several versions of robot ethics. Roboethics, robot ethics, and robot rights are examples of these.

Gianmarco Veruggio, a roboticist, coined the term "roboethics" in 2002.

It was first addressed in public in 2004 at the First International Symposium on Roboethics, and has since been expanded upon and explained in a number of publications.

"Roboethics is an applied ethics whose goal is to build scientific/cultural/technical instruments that may be shared by diverse social groups and beliefs," according to Veruggio. "These technologies are intended to promote and support the development of robotics for the benefit of human society and people, as well as to assist in the prevention of its abuse against humanity" (Veruggio and Operto 2008, 1504).

"Roboethics is neither the ethics of robots, nor any artificial ethics," says one definition, "but it is the human ethics of robots' inventors, makers, and users" (Veruggio and Operto 2008, 1504).

As a result, roboethics is often used to establish a professional ethics for roboticists, and is therefore comparable to other professional, applied ethics formulations such as bioethics or computer ethics.

Examples include the European Robotics Research Network (EURON) Roboethics Roadmap, which aimed to develop an ethical framework for "the design, manufacturing, and use of robots" (Veruggio 2006, 612), and the Foundation for Responsible Robotics (FRR), which recognizes that because "robots are tools without moral intelligence," their creators must "be accountable for the ethical developments that must come with technological innovation" (FRR 2019).

Then there is robot ethics itself. According to Veruggio et al. (2011, 21), robot ethics refers to the code of behavior that designers implement in the artificial intelligence of robots.

This entails a kind of artificial ethics capable of ensuring that autonomous robots behave ethically in all scenarios where they interact with humans or when their activities may have negative implications for humans or the environment.

Robot ethics is concerned with the moral behavior of the machine itself, as opposed to roboethics, which is concerned with the moral conduct of the human creator, developer, or user.

Robot ethics is often confused with "machine ethics," and Veruggio uses both terms interchangeably.

Machine ethics is concerned with the moral capabilities of machines themselves, as opposed to computer ethics, which is concerned with the moral behavior of the human inventor, developer, or user of the system (Anderson and Anderson 2007, 15).

Under the title Moral Machines, Wendell Wallach and Colin Allen have explored a similar line of thought.

"The area of machine morality extends the study of computer ethics beyond concern about what humans do with their computers to issues about what machines do by themselves," according to Wallach and Allen (2009, 6).

Robot ethics, like computer ethics before it, treats technology as a more or less transparent tool or instrument of human moral decision-making and behavior.

Patrick Lin et al. (2012 and 2017) attempted to bring all of these works together under a broader definition of the word, describing it as an emerging discipline of applied moral philosophy.

To date, the majority of robot ethics research has focused on questions of accountability, either as it pertains to the human designers of robotic systems or as it is attributed to the robotic device itself.

However, this is just one side of the story.

As Luciano Floridi and J. W. Sanders (2001, 349–50) correctly point out, ethics is about social relationships between two interacting components: the actor (or agent) and the recipient of the action (or patient).

The majority of roboethics and robot ethics initiatives may be classified as solely agent-oriented endeavors.

The receiving side of this relationship is addressed under the heading of "robot rights," a term used by philosophers Mark Coeckelbergh (2010) and David Gunkel (2018), as well as legal scholars Kate Darling (2012) and Alain Bensoussan and Jérémy Bensoussan.

For these researchers, robot ethics includes not just the robot's moral behavior, but also the artifact's moral and legal standing, as well as its place in our ethical and legal systems as a potential subject rather than merely an object.

The European Parliament has put this notion to the test, proposing a new legal category of electronic person to cope with the societal integration of increasingly autonomous robotics and AI systems.

In conclusion, the phrase "robot ethics" encompasses a wide range of initiatives relating to robots and their societal influence and repercussions.

In its more specialized form, roboethics refers to a field of applied or professional ethics concerned with moral dilemmas connected to the design, development, and implementation of robots and other autonomous technologies.

In a broader sense, robot ethics refers to a branch of moral philosophy concerned with the moral and legal implications of robots acting as both agents and patients.

Robot ethics is a growing interdisciplinary research effort that aims to understand the ethical implications and consequences of robotic technology, particularly autonomous robots.

It is generally located at the crossroads of applied ethics and robotics.

Researchers, thinkers, and academics from fields as varied as robotics, computer science, psychology, law, philosophy, and others are tackling the difficult ethical issues surrounding the development and deployment of robotic technology in society.

Many areas of robotics are affected, particularly those in which robots interact with people, such as elder care and medical robotics, robots for various search and rescue tasks (including military robots), and other types of service and entertainment robots.

While military robots were initially at the forefront of the debate (e.g., whether and when autonomous robots should be allowed to use lethal force, whether they should be allowed to make those decisions autonomously, etc.), the impact of other types of robots, particularly social robots, has grown in importance in recent years.

The IEEE-RAS Technical Committee on Robot Ethics' goal is to offer a platform for the IEEE-RAS to raise and solve the pressing ethical issues raised by and linked with robotics research and technology.

Since its inception in 2004, almost a decade ago, the TC (now in its third generation) has organized various types of meetings (from satellite workshops at main conferences to standalone venues) to draw attention to the increasingly urgent ethical issues raised by rapidly advancing robotics technology.

For example, an increasing number of workshops and special sessions have been offered at recent major conferences (such as ICRA, IACAP, AISB, and others).

There are also plans for further seminars, special sessions, and standalone venues.

Furthermore, an increasing number of publications, public lectures, and interviews by former and current TC co-chairs and other researchers invested in this topic are aimed at raising awareness of the urgent need for researchers and non-researchers alike to understand the social impact and ethical implications of robot technology.

The TC continues to promote public awareness and plans to arrange a standalone worldwide event on robot ethics in the near future, in addition to special sessions and seminars on robot ethics at important international venues.

Artificial Intelligence and Robotics Ethics.

Artificial intelligence (AI) and robotics are digital technologies that will have a major influence on humanity's development in the near future.

They have raised fundamental questions about what we should do with these systems, what they should do for us, what risks they pose, and how we might manage them.

Context.

The ethics of AI and robots are often centered on different "concerns," which is a common reaction to new technology.

Many of these concerns turn out to be rather quaint (trains are too fast for souls); some are predictably wrong when they claim that technology will fundamentally change humans (telephones will destroy personal communication, writing will destroy memory, video cassettes will render going out obsolete); some are broadly correct but only moderately relevant (digital technology will destroy industries that make photographic film, cassette tapes, or vinyl records); and some are broadly correct and deeply relevant (cars will kill children and fundamentally change the landscape).

The purpose of a piece like this is to dissect the concerns and deflate the non-issues.

Some technologies, such as nuclear power, automobiles, and plastics, have sparked ethical and political debate as well as considerable regulatory initiatives to limit their trajectory, generally after some harm has been done.

New technologies, in addition to "ethical issues," challenge present norms and conceptual frameworks, which is of special interest to philosophy.

Finally, once we have understood a technology in its context, we must shape our societal response, which includes regulation and legislation.

All of these characteristics are present in modern AI and robotics technologies, as well as the more basic worry that they will usher in the end of the period of human control on Earth.

In recent years, the ethics of AI and robotics have gotten a lot of press attention, which helps support related research but also risks undermining it: the press frequently talks as if the issues under discussion are just predictions of what future technology will bring, and as if we already know what would be most ethical and how to get there.

Press attention therefore focuses on risk, security (Brundage et al. 2018, see the Other Internet Resources section below, henceforth [OIR]), and the prediction of impact (e.g., on the job market).

The result is a discussion of mostly technical issues that focuses on how to achieve a desired outcome.

Image and public relations are also driving current policy and industrial debates, where the term "ethical" is little more than the new "green," perhaps used for "ethics washing." For an issue to qualify as a problem for AI ethics, we must be unsure of what the right thing to do is.

In this sense, job loss, theft, or killing with AI is not in itself an ethical problem; the question is whether these are permissible in particular circumstances.

This article focuses on serious ethical issues for which we do not have immediate solutions.

Last but not least, AI and robotics ethics is a relatively new field within applied ethics, with significant dynamics but few well-established issues and authoritative overviews, though there is a promising outline (European Group on Ethics in Science and New Technologies 2018) and there are beginnings on societal impact (Floridi et al. 2018; Taddeo and Floridi 2018; S. Taylor et al. 2018; Walsh 2018; Bryson 2019; Gibert 2019; Whittlestone et al.; AI HLEG 2019 [OIR]; IEEE 2019).

As a result, this article must not only reproduce what the community has already achieved, but also propose an ordering where little order exists.

Artificial Intelligence and Robotics

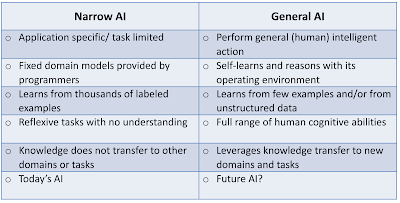

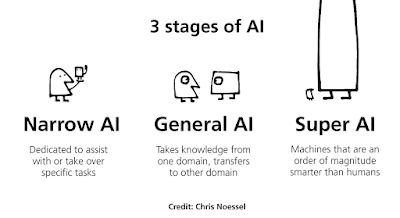

The term "artificial intelligence" (AI) refers to any kind of artificial computer system that exhibits intelligent behavior, i.e., complicated behavior that is conducive to achieving objectives.

We don't want to limit "intelligence" to what would need intelligence if performed by people, as Minsky proposed (1985).

This means we include a variety of machines, including "technical AI" computers that have limited learning and reasoning skills but excel at automating certain activities, as well as "general AI" machines that attempt to produce a generally intelligent agent.

AI somehow gets closer to our skin than previous technologies, which is why a field of "philosophy of AI" has emerged.

Perhaps this is because AI's goal is to develop computers that have a trait that is important to how we humans understand ourselves: feelings, thoughts, and intelligence.

Sensing, modeling, planning, and action are probably the most important functions of an artificially intelligent agent, but current AI applications also include perception, text analysis, natural language processing (NLP), logical reasoning, game-playing, decision support systems, data analytics, predictive analytics, autonomous vehicles, and other forms of robotics (P. Stone et al. 2016).

To accomplish these goals, AI may use a variety of computing strategies, such as traditional symbol-manipulating AI inspired by natural cognition, or machine learning using neural networks (Goodfellow, Bengio, and Courville 2016; Silver et al. 2018).

It's worth mentioning that the term "AI" was widely used from about 1950 to 1975, then fell into disrepute during the "AI winter" of roughly 1975–1995, and narrowed in scope.

As a consequence, areas such as "machine learning," "natural language processing," and "data science" were often not labelled as "AI." Since about 2010, the usage has broadened again, and "AI" is now sometimes taken to cover almost all of computer science and even high-tech.

Now it's a household name, a booming industry with significant capital investment (Shoham et al. 2018), and it is on the edge of hype again.

As Erik Brynjolfsson pointed out, it may enable us to nearly eradicate global poverty, dramatically reduce disease, and give better education to essentially everyone on the planet (quoted in Anderson, Rainie, and Luchsinger 2018).

While AI may be entirely software, robots are physical machines that move.

Robots are subject to physical impact, typically through "sensors," and they exert physical force on the environment, typically through "actuators" such as a gripper or a rotating wheel.

As a result, self-driving automobiles or aircraft are robots, and only a small percentage of robots are "humanoid" (human-shaped), as shown in movies.

Some robots use artificial intelligence, whereas others do not: Typical industrial robots mindlessly execute scripts with limited sensory input and no learning or thinking (about 500,000 new industrial robots are deployed each year (IFR 2019 [OIR])).

While robotics systems are likely to generate more anxiety among the general public, AI systems are more likely to have a bigger influence on humans.

Furthermore, AI or robotics systems that are designed to do a certain set of tasks are less likely to introduce new challenges than systems that are more flexible and autonomous.

As a result, robotics and AI may be thought of as two overlapping sets of systems: AI-only systems, robotics-only systems, and systems that are both.

We're interested in all three, thus the scope of this essay isn't only the intersection of the two sets, but also their union.

Some Thoughts on AI Robotics Policy

One of the issues raised in this essay is policy.

There is a lot of public debate on AI ethics, and politicians often declare that the issue needs new policy, which is easier said than done: Technology policy is challenging to develop and implement in practice.

Policy can take many forms, from incentives and financing, infrastructure, taxes, or good-will statements to regulation by different parties and the law.

AI policy may inadvertently collide with other goals of technology policy or general policy.

In recent years, governments, parliaments, organizations, and business circles in industrialized nations have published studies and white papers, and some have coined catchphrases ("trusted/responsible/humane/human-centered/good/beneficial AI"), but is that what is needed? See Jobin, Ienca, and Vayena (2019) for a survey, as well as V. Müller's list of PT-AI Policy Documents and Institutions.

People working in ethics and policy may have a propensity to exaggerate the influence and risks posed by new technologies, while underestimating the scope of present regulation (e.g., for product liability).

Businesses, the military, and certain government agencies, on the other hand, have a tendency to "just talk" and do some "ethics washing" in order to maintain a positive public image and carry on as before.

Implementing legally enforceable regulation would challenge established corporate structures and practices.

Actual policy is not only an application of ethical theory; it is also shaped by societal power structures, and those with power will resist any restrictions.

As a result, there's a good chance that regulation will be rendered useless in the face of economic and political power.

There have been several notable beginnings, although very little actual policy has been produced: the current EU policy statement says that "trustworthy AI" should be lawful, ethical, and technically robust, and then lists seven requirements: human oversight, technical robustness, privacy and data governance, transparency, fairness, well-being, and accountability (AI HLEG 2019 [OIR]).

Much European research today operates under the banner of "responsible research and innovation," and "technology assessment" has been a standard field since the advent of nuclear power.

In the subject of information technology, professional ethics is also a standard field, and this covers topics that are pertinent to this article.

Perhaps a "code of ethics" for AI developers, similar to medical practitioners' codes of ethics, is a possibility here (Véliz 2019).

What data science itself should do is discussed by L. Taylor and Purtova (2019).

We also believe that, rather than the area as a whole, much regulation will ultimately address individual applications or technologies of AI and robots.

A useful overview of an ethical framework for AI is given in European Group on Ethics in Science and New Technologies (2018: 13ff).

On AI policy in general, see Calo (2018), as well as Crawford and Calo (2016), Stahl, Timmermans, and Mittelstadt (2016), Johnson and Verdicchio (2017), and Giubilini and Savulescu (2018).

A more political perspective on technology is often emphasized in the discipline of "Science and Technology Studies" (STS).

Concerns in STS are frequently fairly similar to those in ethics, as works like The Ethics of Invention (Jasanoff 2016) demonstrate (Jacobs et al. 2019 [OIR]).

Rather than discussing AI or robotics in general, we discuss policy for each type of issue separately in this article.

Human Use Of AI Robotics Has Ethical Issues.

In this part, we look at issues that arise with specific uses of AI and robotics systems, which can be more or less autonomous; that is, we look at issues that arise with certain uses of the technologies and would not arise with others.

It's important to remember, too, that technologies will always make some uses easier, and hence more common, while hindering others.

As a result, the design of technological objects has ethical implications for their use (Houkes and Vermaas 2010; Verbeek 2011), so we need "responsible design" in this sector in addition to "responsible use." The emphasis on use does not presume which ethical approaches are best suited to addressing these difficulties; virtue ethics (Vallor 2017) may be more appropriate than consequentialist or value-based ethics (Floridi et al. 2018).

This section is also unaffected by the debate over whether AI systems have true "intelligence" or other mental properties: It would also apply if AI and robots were just seen as the present face of automation (see Müller forthcoming-b).

Surveillance & Privacy

There is a broader debate over privacy and surveillance in information technology (e.g., Macnish 2017; Roessler 2017), which primarily concerns access to private data and individually identifiable information.

"The right to be left alone," "information privacy," "privacy as a feature of personhood," "control over one's own information," and "the right to secrecy" are all well-known facets of privacy (Bennett and Raab 2006).

Surveillance by other state agents, businesses, and even individuals is now included in privacy studies, which previously focused on state surveillance by secret services.

Technology has advanced dramatically in recent decades, but regulation has lagged behind (though there is Regulation (EU) 2016/679), resulting in a state of anarchy that is exploited by the most powerful parties, sometimes in plain sight, sometimes in secret.

The digital environment has substantially expanded: All data collection and storage is now digital, our lives are becoming more digital, the majority of digital data is now linked to a single Internet, and sensor technology is rapidly being used to create data on non-digital parts of our lives.

AI broadens the scope of intelligent data collecting as well as the scope of data analysis.

This applies to both broad monitoring of whole populations and traditional targeted surveillance.

Furthermore, most of the information is shared between agents for a charge.

Controlling who collects which data and who has access, on the other hand, is considerably more difficult in the digital world than it was in the analogue world of paper and phone conversations.

Many new AI technologies magnify already identified problems.

Face recognition in images and videos, for example, allows identification and hence profiling and searching for people (Whittaker et al. 2018: 15ff).

The same holds for other identification techniques, such as "device fingerprinting," which are prevalent on the Internet (and occasionally disclosed in the "privacy policy").

As a consequence, "there is a disturbingly full image of ourselves in this immense ocean of data" (Smolan 2016: 1:01).

The result is arguably a scandal that has not yet received the public attention it deserves.

Our "free" services are paid for by the data trail we leave behind—but we aren't notified about the data collecting or the worth of this new raw material, and we are pushed into leaving even more data.

The main data-collection business of the "big 5" corporations (Amazon, Google/Alphabet, Microsoft, Apple, and Facebook) seems to be built on deceit, exploiting human vulnerabilities, promoting procrastination, inducing addiction, and manipulation (Harris 2016 [OIR]).

In this "surveillance economy," the major goal of social media, gaming, and much of the Internet is to acquire, keep, and direct attention—and therefore data supply.

"The Internet's economic model is surveillance" (Schneier 2015).

"Surveillance capitalism" is a term used to describe the surveillance and attention economy (Zuboff 2019).

It has resulted in several efforts to break free from these companies' hold, such as via "minimalism" (Newport 2019) and the open source movement, but it seems that today's people lack the degree of autonomy required to break free while continuing to live and work normally.

If "ownership" is the proper connection here, we have lost ownership of our data.

We have, in some ways, lost control of our data.

These systems often disclose truths about us that we prefer to keep hidden or are unaware of: they know more about us than we do.

Even just observing our online behavior provides insight into our mental processes (Burr and Cristianini 2019) and may be used to manipulate us (see section 2.2 below).

As a result, calls for the protection of "derived data" have been made (Wachter and Mittelstadt 2019).

In the final sentence of his best-selling book Homo Deus, Harari asks about the long-term repercussions of AI: What will happen to society, politics, and everyday life when non-conscious yet extremely intelligent algorithms know us better than we do? (Harari 2016: 462)

Except for security patrols, robotic devices have not yet played a significant role in this sector, but that will change as they become more common outside of industrial contexts.

They are destined to become part of the data-gathering machinery, alongside the "Internet of things," so-called "smart" systems (phone, TV, oven, lamp, virtual assistant, house, ...), the "smart city" (Sennett 2018), and "smart government."

Techniques such as (relative) anonymisation, access control (plus encryption), and other models where computation is carried out with fully or partially encrypted input data are now standard in data science (Stahl and Wright 2018); in the case of "differential privacy," protection comes from adding calibrated noise to the output of queries rather than from encryption (Dwork et al. 2006; Abowd 2017).

While more time and money are required, such solutions may help to avoid many of the privacy concerns.
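The idea of adding calibrated noise to query outputs can be sketched with the Laplace mechanism, a standard construction for differential privacy. The example below is a minimal illustration rather than a production implementation: the function names, the sample data, and the choice of `epsilon` are assumptions made for this sketch, and Python's `random` module is not a cryptographically secure noise source.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Draw one sample from a Laplace(0, scale) distribution.

    The difference of two independent exponential variables with mean
    `scale` follows a Laplace distribution with that scale.
    """
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(records, predicate, epsilon: float, rng: random.Random) -> float:
    """Answer a counting query with noise calibrated to epsilon."""
    true_count = sum(1 for r in records if predicate(r))
    # Adding or removing one person changes a count by at most 1,
    # so the sensitivity of a counting query is 1.
    sensitivity = 1.0
    return true_count + laplace_noise(sensitivity / epsilon, rng)


# Hypothetical data: ages of eight people; the query asks how many are 40 or older.
ages = [23, 35, 41, 29, 67, 52, 38, 71]
rng = random.Random(0)  # seeded only to make the illustration repeatable
noisy = private_count(ages, lambda a: a >= 40, epsilon=0.5, rng=rng)
print(f"true count: 4, noisy answer: {noisy:.2f}")
```

A smaller `epsilon` means more noise and stronger privacy, while a larger one means answers closer to the truth. This is one concrete form of the cost mentioned above: accuracy is given up in exchange for protection.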

Better privacy has also been considered by certain firms as a competitive advantage that can be exploited and sold for a profit.

One of the most challenging aspects of regulation is enforcing it, both at the state level and at the level of the person who has a claim.

They must identify the liable legal entity, prove the action, perhaps prove intent, find a court that declares itself competent ... and finally persuade the court to follow through on its judgment.

Consumer rights, product liability, and other civil liabilities, as well as protection of intellectual property rights, are often lacking or difficult to enforce with digital goods.

This implies that enterprises with a "digital" background are used to testing their goods on customers without fear of legal repercussions while vigorously defending their intellectual property rights.

This "Internet Libertarianism" is sometimes taken to assume that technological solutions will take care of social problems on their own (Morozov 2013).

Manipulation of Behaviour

The ethical difficulties raised by AI in surveillance extend beyond the collection of data and the focus of attention: They include the use of data to influence behavior, both online and offline, in a manner that impairs rational decision-making autonomy.

Of course, attempts to control behavior are nothing new, but when AI systems are used, they may take on a new dimension.

Users are subject to "nudges," manipulation, and deceit because of their intensive connection with data systems and the rich information about persons that this gives.

With enough past data, algorithms may be used to target people or small groups with precisely the kind of information that would most likely affect them.

A 'nudge' alters the environment in such a manner that it impacts behavior in a predictable, positive way that is simple and inexpensive to avoid (Thaler & Sunstein 2008).

From here, it's a short step to paternalism and manipulation.

Many advertisers, marketers, and internet vendors will use behavioural biases, deceit, and addiction development to maximize profit (Costa and Halpern 2019 [OIR]).

The economic strategy for most of the gambling and gaming businesses involves manipulation, but it is expanding to other sectors, such as low-cost airlines.

This manipulation is done using "dark patterns" in web page or gaming interface design (Mathur et al. 2019).

Gambling and the selling of addictive drugs are heavily regulated at the present, but online manipulation and addiction are not—despite the fact that manipulating online behavior is becoming a key Internet business model.

Furthermore, political propaganda is now primarily distributed through social media.

As in the Facebook-Cambridge Analytica scandal (Woolley and Howard 2017; Bradshaw, Neudert, and Howard 2019), this influence may be used to sway voting behavior, and if effective, it may jeopardize human liberty (Susser, Roessler, and Nissenbaum 2019).

Improved AI "faking" technologies turn what was previously dependable evidence into unreliable evidence—digital pictures, voice recordings, and video have all been affected.

It will be rather simple to produce (rather than change) "deep fake" text, images, and video material with any desired content in the near future.

Real-time engagement with people by text, phone, or video will soon be faked as well.

As a result, we cannot trust digital interactions even as we become more reliant on them.

Another difficulty is that AI machine learning algorithms depend on large volumes of data for training.

As a result, there will often be a trade-off between privacy and data rights on the one hand and the technical quality of the product on the other.

This has an impact on the consequentialist assessment of privacy-invading behaviors.

Policy in this field has its ups and downs: civil liberties and the protection of individual rights are under intense pressure from business lobbying, secret services, and other governmental agencies that rely on surveillance.

In comparison to the pre-digital period, when communication was dependent on letters, analogue telephone conversations, and human interaction, and monitoring was subject to severe legal limits, privacy protection has deteriorated dramatically.

Although the EU General Data Protection Regulation (Regulation (EU) 2016/679) has strengthened privacy protection, the US and China prefer growth with less regulation (Thompson and Bremmer 2018), most likely in the hope of gaining a competitive edge.

It is evident that, with the assistance of AI technology, state and corporate actors have expanded their power to breach privacy and influence individuals, and will continue to do so to serve their own interests—unless legislation in the public interest intervenes.

AI Systems' Transparency.

The central issues in what is now referred to as "data ethics" or "big data ethics" are opacity and bias (Floridi and Taddeo 2016; Mittelstadt and Floridi 2016).

"Significant issues regarding a lack of due process, accountability, community participation, and auditing" are raised by AI systems for automated decision assistance and "predictive analytics" (Whittaker et al. 2018: 18ff).

They are part of a power structure that "creates decision-making procedures that restrict and limit human involvement" (Danaher 2016b: 245).

At the same time, the impacted individual will often be unable to understand how the system arrived at this result, i.e., the system will be "opaque" to that person.

If the system uses machine learning, even an expert will struggle to understand how a certain pattern was detected, let alone what the pattern is.

This opacity exacerbates bias in decision systems and data sets.

So, at least in circumstances where there is a desire to eliminate bias, opacity and bias analysis go hand in hand, and political responses must address both challenges simultaneously.

Many AI systems depend on supervised, semi-supervised, or unsupervised machine learning methods in (simulated) neural networks to extract patterns from a given dataset, with or without "correct" answers supplied.

With these methods, the "learning" identifies patterns in the data and labels them in a manner that looks relevant to the choice the system makes, despite the fact that the programmer has no idea which patterns in the data the system has employed.

In reality, the algorithms are changing, so when fresh data or feedback ("this was accurate," "this was wrong") is received, the learning system's patterns alter.

This implies that the end result is opaque to the user and coders.

Furthermore, the quality of the program depends heavily on the quality of the data provided, following the old adage "garbage in, garbage out." So, if the data already contains a bias (for example, police data on suspects' skin color), the algorithm will reproduce that bias.
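The point that a learner reproduces whatever bias its training data contains can be illustrated with a deliberately tiny sketch. Everything here is hypothetical: the groups "A" and "B", the record counts, and the frequency-based "model" are illustrative assumptions, not a description of any real system.

```python
from collections import defaultdict

def train(records):
    """Learn, per group, the fraction of past cases that were flagged."""
    counts = defaultdict(lambda: [0, 0])  # group -> [flagged, total]
    for group, flagged in records:
        counts[group][0] += int(flagged)
        counts[group][1] += 1
    return {group: flagged / total for group, (flagged, total) in counts.items()}

def predict(model, group, threshold=0.5):
    """Flag a new case whenever its group was mostly flagged in the past."""
    return model[group] >= threshold

# Hypothetical historical records: group A was flagged far more often than
# group B, for reasons unrelated to anything the model can observe.
history = (
    [("A", True)] * 80 + [("A", False)] * 20
    + [("B", True)] * 20 + [("B", False)] * 80
)

model = train(history)
print(model)
print(predict(model, "A"), predict(model, "B"))
```

The model has no notion of why the historical labels differ between the groups; it simply carries the skew forward into every new decision.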

There have been ideas for a common representation of datasets in the form of a "datasheet," which would make detecting bias more straightforward (Gebru et al. 2018 [OIR]).

There's also a lot of new research on the limits of machine learning systems, which are basically powerful data filters (Marcus 2018 [OIR]).

Some have contended that today's ethical issues are the consequence of AI's technological "shortcuts" (Cristianini forthcoming).

Numerous technical efforts aimed at "explainable AI" have been undertaken, starting with Van Lent, Fisher, and Mancuso (1999) and Lomas et al. (2012) and, more recently, a DARPA initiative (Gunning 2017 [OIR]).

The requirement for a system for clarifying and articulating the power structures, biases, and impacts that computational artefacts exert in society is often referred to as "algorithmic accountability reporting" (Diakopoulos 2015: 398).

This isn't to say that we should expect an AI to "explain its reasoning"; doing so would require far greater moral autonomy than we currently attribute to AI systems (see section 2.10).

If we rely on a system that is supposedly superior to humans but cannot explain its decisions, there is a fundamental problem for democratic decision-making, according to politician Henry Kissinger.

We may have "created a potentially dominant technology in search of a guiding philosophy," according to him (Kissinger 2018).

Danaher (2016b) refers to this issue as "the threat of algocracy" (adopting the earlier use of the term "algocracy" in Aneesh 2002 [OIR], 2006).

To prevent AI becoming a force that leads to a Kafka-style impenetrable suppression mechanism in public administration and elsewhere, Cave (2019) emphasizes the necessity for a larger social shift toward more "democratic" decision-making.

The political aspect of this debate has been emphasized by O'Neil in her influential book Weapons of Math Destruction (2016) and by Yeung and Lodge (2019).

Some of these concerns have been addressed in the EU by Regulation (EU) 2016/679, which stipulates that consumers have a legal "right to explanation" when confronted with a decision based on data processing; how far this goes and to what extent it can be enforced is debated (Goodman and Flaxman 2017; Wachter, Mittelstadt, and Floridi 2016; Wachter, Mittelstadt, and Russell 2017).

According to Zerilli et al. (2019), there may be a double standard here, in which we require a high degree of explanation for machine-based judgments even though humans do not always meet that threshold.

AI Bias.

Automated AI decision support systems and "predictive analytics" operate on data and produce a decision as "output."

This output might be anything from "this restaurant matches your tastes" to "the patient in this X-ray has finished bone development," "credit card application refused," "donor organ will be donated to another patient," "bail is denied," or "target identified and engaged." Data analysis is often used in "predictive analytics" in business, healthcare, and other industries to forecast future events; as prediction becomes simpler, it will become a cheaper commodity.

Prediction is used in "predictive policing" (NIJ 2014 [OIR]), which many worry will erode civil rights (Ferguson 2017) since it can take power away from the people whose behavior is predicted.

Many of the concerns about policing, however, seem to be based on future scenarios in which law enforcement anticipates and punishes planned activities rather than waiting until a crime is committed (as in the 2002 film "Minority Report").

One problem is that these systems may amplify bias already present in the data used to build them, for example by increasing police patrols in a certain area and thereby uncovering more crime in that area.
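The amplification mechanism can be made concrete with a small deterministic simulation. This is only a sketch under stated assumptions: two hypothetical districts with identical true crime rates, a made-up "hot spot" policy that sends 70% of patrols to whichever district has more recorded crime, and recorded crime that grows in proportion to patrol presence. None of these numbers come from real policing data.

```python
def simulate(rounds, true_rate=(0.1, 0.1), recorded=(11.0, 10.0), patrols_total=100):
    """Return the recorded-crime totals per district after each round."""
    recorded = list(recorded)
    history = []
    for _ in range(rounds):
        # Hypothetical policy: the district with more recorded crime is the
        # "hot spot" and receives 70% of patrols; the other receives 30%.
        hot = 0 if recorded[0] >= recorded[1] else 1
        patrols = [0.3 * patrols_total, 0.3 * patrols_total]
        patrols[hot] = 0.7 * patrols_total
        # Crime is only recorded where patrols are present to observe it.
        for district in range(2):
            recorded[district] += true_rate[district] * patrols[district]
        history.append(tuple(recorded))
    return history

history = simulate(10)
for step, (d0, d1) in enumerate(history, start=1):
    print(step, round(d0, 1), round(d1, 1), round(d0 / (d0 + d1), 3))
```

Although both districts have the same underlying rate, the small initial difference in the records decides where patrols go, and the extra patrols then generate the extra records that appear to justify the allocation.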

Actual "predictive policing" or "intelligence-led policing" tactics are primarily concerned with determining where and when police personnel will be most required.

In workflow support software (e.g., "ArcGIS"), police officers may also be given additional data, giving them greater power and allowing them to make better judgments.

Whether this is an issue depends on the appropriate level of trust in the technical quality of these systems, and on how the goals of the police work itself are evaluated.

The title of a recent study may point in the right direction here: "AI Ethics in Predictive Policing: From Models of Threat to an Ethics of Care" (Asaro 2019).

Bias typically arises when a person makes an unjust judgment because of a feature that is actually irrelevant to the matter at hand, such as a prejudiced assumption about members of a group.

One kind of bias is therefore a learned cognitive trait of a person, which is frequently not made explicit.

The individual in question may not be conscious of having the bias, and may even be openly and explicitly opposed to a bias that they are found to have (e.g., through priming, cf. Graham and Lowery 2004).

On fairness vs. bias in machine learning, see Binns (2018).

Apart from the social phenomenon of learned bias, the human cognitive system is prone to a variety of "cognitive biases," such as "confirmation bias," in which people tend to interpret information as supporting their existing beliefs.

This second kind of bias is generally considered to impair rational judgment (Kahneman 2011), though certain cognitive biases may provide an evolutionary benefit, such as the efficient use of resources for intuitive judgment.

It's debatable whether AI systems should or might exhibit cognitive bias.

A third kind of bias is present in data that contains systematic error, i.e., "statistical bias."

Strictly speaking, any given dataset will only be unbiased for a single kind of problem, so merely creating a dataset carries the risk that it will be used for a different kind of problem and turn out to be biased for that kind.

Machine learning trained on such data will not only fail to recognize the bias but will also codify and automate the "historical bias." An automated recruitment screening system at Amazon (discontinued early 2017) was revealed to be biased against women, likely because the corporation has a history of discriminating against women in the employment process.

The "Correctional Offender Management Profiling for Alternative Sanctions" (COMPAS), a system for predicting whether a defendant will reoffend, was found to be as accurate (65.2 percent) as a group of random humans (Dressel and Farid 2018), with more false positives and fewer false negatives for black defendants.

As a result, the issue with such systems is bias, as well as people's undue trust in them.
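How a risk-scoring system can be equally accurate for two groups while distributing its errors very differently can be shown with a short calculation. The counts below are invented for illustration and are not the COMPAS figures; the group labels "X" and "Y" and the function name are likewise hypothetical.

```python
def error_rates(outcomes):
    """outcomes: iterable of (reoffended, predicted_high_risk) booleans."""
    tp = tn = fp = fn = 0
    for reoffended, predicted_high_risk in outcomes:
        if predicted_high_risk and reoffended:
            tp += 1
        elif predicted_high_risk and not reoffended:
            fp += 1  # labelled high risk, but did not reoffend
        elif not predicted_high_risk and reoffended:
            fn += 1  # labelled low risk, but did reoffend
        else:
            tn += 1
    return {
        "accuracy": (tp + tn) / (tp + tn + fp + fn),
        "false_positive_rate": fp / (fp + tn),
        "false_negative_rate": fn / (fn + tp),
    }

# Hypothetical confusion counts for two groups of 100 defendants each.
group_x = [(True, True)] * 30 + [(False, False)] * 30 + [(False, True)] * 30 + [(True, False)] * 10
group_y = [(True, True)] * 20 + [(False, False)] * 40 + [(False, True)] * 10 + [(True, False)] * 30

rates_x = error_rates(group_x)
rates_y = error_rates(group_y)
print(rates_x)
print(rates_y)
```

In this made-up data both groups see the same overall accuracy, yet members of group X who do not reoffend are far more likely to be labelled high risk, which is why a single accuracy figure says little about fairness.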

Eubanks (2018) investigates the political aspects of such automated systems in the United States.

There are substantial technological efforts underway to identify and eliminate bias from AI systems, but they are still in their infancy: see the UK Institute for Ethical AI & Machine Learning (Brownsword, Scotford, and Yeung 2017; Yeung and Lodge 2019).

Technical remedies tend to have limitations in that they need a mathematical definition of fairness, which is difficult to come by (Whittaker et al. 2018: 24ff; Selbst et al. 2019), as well as a formal notion of "race" (see Benthall and Haynes 2019).
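Why a mathematical definition of fairness is hard to settle on can be seen by comparing two common candidate criteria on the same predictions. The sketch below assumes two hypothetical groups with different base rates and a classifier that happens to predict every case correctly; the criteria named in the comments (demographic parity and equal opportunity) are standard terms from the fairness literature rather than anything specified in the text above.

```python
def selection_rate(pairs):
    """Share of people who receive the positive decision."""
    return sum(predicted for _, predicted in pairs) / len(pairs)

def true_positive_rate(pairs):
    """Share of actually qualified people who receive the positive decision."""
    decisions_for_qualified = [predicted for actual, predicted in pairs if actual]
    return sum(decisions_for_qualified) / len(decisions_for_qualified)

# Hypothetical data as (actually_qualified, predicted_qualified) pairs.
# The classifier is perfect, but the groups have different base rates.
group_a = [(True, True)] * 50 + [(False, False)] * 50
group_b = [(True, True)] * 20 + [(False, False)] * 80

# Demographic parity asks for equal selection rates across groups.
parity_gap = selection_rate(group_a) - selection_rate(group_b)
# Equal opportunity asks for equal true positive rates across groups.
opportunity_gap = true_positive_rate(group_a) - true_positive_rate(group_b)

print(parity_gap, opportunity_gap)
```

The same predictions satisfy one criterion and violate the other, so any technical fix has to start from a choice among definitions, and that choice is not itself a technical matter.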

An institutional proposal has also been put forward (Veale and Binns 2017).

Interaction between humans and robots.

Human-robot interaction (HRI) is a distinct academic discipline that today pays close attention to ethical issues, perception dynamics on both sides, and both the diversity of interests and the complexities of the social milieu, including co-working (e.g., Arnold and Scheutz 2017).

Calo, Froomkin, and Kerr (2016), Royakkers and van Est (2016), and Tzafestas (2016) are useful surveys of robot ethics; a standard collection of papers is Lin, Abney, and Jenkins (2017).

While AI may be used to persuade people to believe and act in certain ways (see section 2.2), it can also be used to control robots that are problematic if their methods or appearance are deceptive, endanger human dignity, or violate Kant's "respect for humanity" criterion.

Humans are quick to ascribe mental traits to things and empathize with them, particularly when the items' exterior appearance resembles that of live creatures.

This may be used to trick people (or animals) into giving robots or AI systems more intellectual or even emotional weight than they deserve.

Some aspects of humanoid robotics (e.g., Hiroshi Ishiguro's remote-controlled Geminoids) are problematic in this sense, and some cases have been plainly deceptive for public relations purposes (e.g., Hanson Robotics' "Sophia").

Of course, certain very basic constraints of business ethics and law apply to robots as well, such as product safety and liability, or non-deception in advertising.

Many of the problems that have been mentioned seem to be addressed by the present limits.

However, there are several aspects of human-human interaction that seem to be uniquely human in ways that robots may not be able to replicate: care, love, and sex.

Care Robots

The employment of robots in human health care is presently limited to concept research in actual surroundings, but it might become a practical technology in a few years, raising fears of a dystopian future of dehumanized care (A. Sharkey and N. Sharkey 2011; Robert Sparrow 2016).

Robots that assist human caregivers (e.g., in lifting patients or transporting materials), robots that enable patients to do certain tasks on their own (e.g., eat with a robotic arm), and robots that are given to patients as companions and comfort (e.g., the "Paro" robot seal) are all examples of current systems.

See van Wynsberghe (2016), Nørskov (2017), and Fosch-Villaronga and Albo-Canals (2019) for an overview, and Draper et al. (2014) for a survey of users.

People have claimed that we will need robots in ageing societies, which is one reason why the topic of care has risen to the fore.

This argument is flawed because it assumes that as people live longer, they will need more care and that it will be impossible to recruit more people into caring professions.

It might also reveal an age prejudice (Jecker forthcoming).

Most crucially, it misses the essence of automation, which is about assisting people to work more effectively rather than replacing them.

It's not apparent if there's a problem here, given that the conversation largely centers on the fear of robots dehumanizing care, yet the actual and anticipated robots in care are helpful robots for traditional technical work automation.

They are therefore "care robots" solely in the sense that they execute activities in healthcare facilities, not in the sense that a human "cares" for the patients.

The success of "being cared for" seems to depend on this intentional sense of "care," which foreseeable robots cannot provide.

The concern of robots in care isn't so much the lack of such purposeful care as it is the necessity for fewer human caregivers.

Surprisingly, caring for anything, even a virtual agent, may be beneficial to the caregiver (Lee et al. 2019).

Unless the deception is offset by a sufficiently high utility benefit, a system that purports to care would be misleading and hence problematic (Coeckelbergh 2016).

Some robots that pretend to "care" on a rudimentary level are already on the market (Paro seal), while others are under development.

To some degree, feeling cared for by a machine may be progress for certain people.

Sex Robots

Several tech optimists have stated that humans will be interested in sex and friendship with robots and will be comfortable with the notion (Levy 2007).

This seems very likely, given the diversity of human sexual tastes, including sex toys and sex dolls: The debate is whether such devices should be produced and marketed, and whether there should be any restrictions in this sensitive field.

The topic seems to have only recently entered the mainstream of "robot philosophy" (Sullins 2012; Danaher and McArthur 2017; N. Sharkey et al. 2017 [OIR]; Bendel 2018; Devlin 2018).

Humans have traditionally had strong emotional relationships to items, so maybe friendship or even love with a dependable android is appealing, particularly to those who have difficulty interacting with real people and already prefer dogs, cats, birds, computers, or tamagotchis.

Danaher (2019b) counters Nyholm and Frank (2017) by arguing that these may be real friendships and hence a worthwhile objective.

Even if it is shallow, such a friendship might increase overall utility.

There is a problem of dishonesty in these conversations, since a robot cannot (at this time) mean what it says or have affections for a person.

Humans are renowned for attributing emotions and ideas to creatures that act as though they had sentience, even to plainly inanimate objects that display no behavior at all.

In addition, it seems that paying for deceit is an integral aspect of the conventional sex economy.

Finally, there are worries that have always accompanied matters of sex, such as consent (Frank and Nyholm 2017), aesthetic considerations, and the fear that certain experiences would "corrupt" people.

Human behavior is shaped by experience, and pornography or sex robots are likely to encourage the idea of other people as mere objects of desire, or even recipients of abuse, and thereby undermine a deeper sexual and romantic experience.

In this vein, the "Campaign Against Sex Robots" claims that these devices are a continuation of slavery and prostitution (Richardson 2016).

Employment and Automation.

AI and robots, it seems, will result in large increases in productivity and consequently global prosperity.

Though the focus on "growth" is a recent phenomenon, the desire to boost productivity has long been a characteristic of the economy (Harari 2016: 240).

Automation, on the other hand, often means that fewer individuals are needed to produce the same amount of output.

However, this does not necessarily imply a reduction in total employment, since available wealth rises, which may boost demand enough to offset the productivity gains.
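The offsetting effect can be checked with simple arithmetic. The figures below are purely illustrative assumptions (a single product, a made-up 25% productivity gain, and a hypothetical 25% rise in demand), and the function name is invented for this sketch.

```python
def workers_needed(units_demanded, units_per_worker):
    """Workers required to meet demand at a given productivity level."""
    return units_demanded / units_per_worker

# Baseline: 1000 units demanded, each worker produces 10 units.
baseline = workers_needed(1000, 10)

# Automation raises productivity by 25%; if demand stays flat, jobs are lost.
after_automation = workers_needed(1000, 12.5)

# If greater wealth raises demand by the same 25%, employment is unchanged.
with_higher_demand = workers_needed(1250, 12.5)

print(baseline, after_automation, with_higher_demand)
```

Whether demand actually rises enough in a given sector is an empirical question; the calculation only shows that higher productivity and stable employment are arithmetically compatible.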

In the long term, increased productivity in industrial society has resulted in an increase in total wealth.

Historically, major labor market changes have occurred; for example, farming employed almost 60% of the workforce in Europe and North America in 1800, but just 5% in 2010 in the EU, and even less in the richest nations (European Commission 2013).

Between 1950 and 1970, the number of employed agricultural labourers in the United Kingdom fell by half (Zayed and Loft 2019).

Some of these disruptions result in more labor-intensive companies relocating to lower-cost locations.

This is an ongoing process.

Digital automation, unlike physical machinery, substitutes human cognition or information processing (Bostrom and Yudkowsky 2014).

As a result, a more drastic shift in the labor market is possible.

So, the big concern is whether the impacts will be different this time.

Will the creation of new jobs and wealth keep up with the job losses? And, even if it does, what are the transition costs, and who bears them? Do we need to undertake social changes to ensure that the costs and benefits of digital automation are distributed fairly? Responses have ranged from the fearful (Frey and Osborne 2013; Westlake 2014) to the neutral (Metcalf, Keller, and Boyd 2016 [OIR]; Calo 2018; Frey 2019) to the hopeful (Brynjolfsson and McAfee 2016; Harari 2016; Danaher 2019a).

In principle, the effect of automation on the labor market appears to be fairly well understood as involving two channels:

(i) the nature of interactions between differently skilled workers and new technologies affecting labor demand, and (ii) the equilibrium effects of technological progress through subsequent changes in labor supply and product markets. (Goos et al. 2018: 362)

"Job polarisation" or the "dumbbell" shape (Goos, Manning, and Salomons 2009) seems to be occurring in the labor market as a consequence of AI and robotics automation: High-skilled technical jobs are in high demand and well compensated, low-skilled service jobs are in high demand but poorly compensated, while the middle tier of jobs in factories and offices, i.e., the majority of jobs, is under pressure and being eliminated because these jobs are relatively predictable and most likely to be automated (Baldwin 2019).

Perhaps enormous productivity gains will allow the "age of leisure" to come to pass, as predicted by Keynes in 1930 (assuming a 1% annual growth rate).

Actually, we've already achieved the amount he predicted for 2030, but we're still working—consuming more and constructing ever higher organizational layers.

Harari describes how economic growth enabled mankind to overcome famine, sickness, and war, and how we now aim for immortality and everlasting happiness via artificial intelligence, hence his title Homo Deus (Harari 2016: 75).

The issue of unemployment is, in general, a question of how goods in a society should be distributed justly.

A common belief is that distributive justice should be chosen logically from behind a "veil of ignorance" (Rawls 1971), that is, as if one had no idea what place in society one would be occupying (labourer or industrialist, etc.).

Rawls believed that the selected principles would then promote fundamental rights and a distribution that benefited the poorest members of society the most.

The AI economy seems to have three characteristics that make such justice unlikely:

- First, it operates in a largely unregulated environment in which responsibility is often difficult to assign.

- Second, it functions in marketplaces where monopolies form fast due to a "winner takes all" characteristic.

- Third, the "new economy" of the digital service industries is founded on intangible assets, also called "capitalism without capital" (Haskel and Westlake 2017).

This implies that multinational digital firms that do not depend on a physical presence in a particular location are difficult to regulate.

These three characteristics seem to indicate that if we leave wealth distribution to free market forces, the consequence will be a very unequal distribution: And this is a trend that we are currently seeing.

One interesting question that has received little attention is whether AI development is ecologically sustainable: AI systems, like other computing systems, generate waste that is difficult to recycle and consume enormous amounts of energy, particularly when training machine learning systems (and also when "mining" cryptocurrencies).

It appears that some players in this space offload these costs to the general public.

Autonomous Systems

In the context of autonomous systems, there are numerous definitions of autonomy.

A stronger concept is at play in philosophical debates, where autonomy is the foundation for responsibility and personhood (Christman 2003 [2018]).

In this context, accountability implies autonomy, but not the other way around, therefore systems with varying degrees of technological autonomy may exist without generating responsibility concerns.

In robotics, the weaker, more technical concept of autonomy is relative and gradual: A system is said to be autonomous, to a certain degree, with respect to human control (Müller 2012).

Since autonomy also involves a power relationship (who is in charge and who is accountable), there is a connection to the difficulties of bias and opacity in AI.

In general, one concern is whether autonomous robots pose difficulties to which our current conceptual systems must adapt, or whether they just need technological changes.

Most nations have a sophisticated system of civil and criminal liability to settle such difficulties.

Technical norms, such as those governing the safe use of machines in medical settings, will very certainly need to be revised.

For such safety-critical systems and "security applications," there is already a discipline called "verifiable AI." The IEEE (Institute of Electrical and Electronics Engineers) and the BSI (British Standards Institution) have published "standards," focusing on more technical issues such as data security and transparency.

Autonomous systems may be found on land, at sea, under water, in the air, or in space; we look at two examples: autonomous vehicles and autonomous weapons.

Autonomous Vehicles

Autonomous vehicles have the potential to lessen the enormous harm that human driving now causes—roughly 1 million people are killed each year, many more are wounded, the environment is polluted, the land is coated with concrete and asphalt, cities are full of parked automobiles, and so on.

However, there seem to be concerns about how autonomous cars should act, as well as how responsibility and risk should be shared in the complex system in which they operate.

(There is also much dispute about how long it will take to build fully autonomous, or "level 5," cars (SAE International 2018).) In this context, there is considerable discussion of "trolley problems." The classic "trolley problems" (Thomson 1976; Woollard and Howard-Snyder 2016: part 2) describe various dilemmas. The simplest form is a trolley on a track that is heading straight towards five people and will kill them unless it is diverted onto a side track, but that side track has one person on it who will be killed if the trolley takes it.

The example goes back to a remark in (Foot 1967: 6) on a variety of dilemma scenarios in which the tolerated and intended consequences of an action diverge.

"Trolley dilemmas" aren't designed to be used to illustrate real ethical issues or to be addressed by making the "correct" decision.

Rather, they are thought experiments in which the agent's choice is arbitrarily limited to a small number of unique one-off alternatives and the agent possesses complete information.

These problems serve as a theoretical tool for investigating the distinction between actively doing something vs. allowing something to happen, intended vs. tolerated consequences, and consequentialist vs. alternative normative approaches (Kamm 2016).

This kind of problem has reminded many of the situations encountered in real driving and in autonomous driving (Lin 2016).

However, it's unlikely that a real driver or a self-driving vehicle would ever have to solve a trolley problem (but see Keeling 2020).

While autonomous car trolley problems have garnered a lot of media attention (Awad et al. 2018), they don't seem to add anything to ethical theory or to the programming of autonomous vehicles.

The most prevalent ethical issues in driving, such as speeding, unsafe overtaking, failing to maintain a safe distance, and so on, are typical cases of personal gain vs. the collective good.

The great majority of them are covered by traffic law.

Programming the car to drive "by the rules" rather than "in the best interests of the passengers" or "to maximize utility" thus reduces the challenge to a standard problem of programming ethical machines (see section 2.9).

There are probably additional discretionary rules of politeness and interesting questions about when to break the rules (Lin 2016), but this seems to be more a matter of applying standard considerations (rules vs. utility) to the case of autonomous vehicles.

In this arena, notable policy initiatives include the report (German Federal Ministry of Transport and Digital Infrastructure 2017), which emphasizes the importance of safety.

The tenth rule states: In the case of automated and connected driving systems, responsibility passes from the individual driver to the producers and operators of the technical systems, as well as to the bodies responsible for infrastructure, policy, and legal decisions.

(See section 2.10.1 for further information.) The resulting German and EU legislation on licensing autonomous driving is much more restrictive than its American counterparts, where some companies use "testing on consumers" as a strategy, without the informed consent of the consumers or of potential victims.

Autonomous Weapons.

The concept of automated weaponry is not new: Instead of simple guided missiles or remotely piloted vehicles, for example, we might deploy fully autonomous land, sea, and air vehicles capable of complicated, long-range surveillance and strike operations. (DARPA 1983: 1)

At the time, this concept was mocked as "fantasy" (Dreyfus, Dreyfus, and Athanasiou 1986: ix), but it is now a reality, at least for more easily identifiable targets (missiles, aircraft, ships, tanks, and so on), though not for human combatants.

The main arguments against (lethal) autonomous weapon systems (AWS or LAWS) are that they encourage extrajudicial killings, remove accountability from people, and increase the likelihood of conflicts or killings; see Lin, Bekey, and Abney (2008: 73–86) for a thorough list of problems.

It seems that lowering the threshold for using such systems (autonomous vehicles, "fire-and-forget" missiles, or drones carrying explosives) and reducing the risk of being held responsible will increase their use.

The key asymmetry, in which one side can kill with impunity and so has few reasons not to, already exists in conventional drone warfare with remotely controlled weapons (e.g., US in Pakistan).

It's simple to envisage a tiny drone searching for, identifying, and killing a single person—or possibly a certain sort of human.

The Campaign to Stop Killer Robots and other activist organizations have brought forward examples like these.

Some of these arguments amount to saying that autonomous weapons are, in fact, weapons..., and that weapons kill, yet we continue to manufacture them in massive quantities.

In terms of accountability, autonomous weapons may make it more difficult to identify and prosecute the culpable agents—but this is unclear, given the digital records that may be kept, at least in conventional warfare.

The "retribution gap" is a term used to describe the difficulties of distributing punishment (Danaher 2016a).

Another concern is whether the use of autonomous weapons in conflict would make wars worse or better.

If robots reduce war crimes and crimes in war, the answer may well be positive; this has been used as an argument in favor of these weapons (Arkin 2009; Müller 2016a) but also as an argument against them (Amoroso and Tamburrini 2018).

The major concern, according to some, is not the deployment of such weapons in traditional combat, but rather in asymmetric conflicts or by non-state actors, such as criminals.

Autonomous weapons are also claimed to be incompatible with International Humanitarian Law, which requires armed conflict to adhere to the principles of distinction (between combatants and civilians), proportionality (of force), and military necessity (A. Sharkey 2019).

True, distinguishing between fighters and non-combatants is difficult, but distinguishing between civilian and military ships is simple—all this means is that such weapons should not be built or used if they violate Humanitarian Law.

Additional concerns have been raised that being killed by an autonomous weapon threatens human dignity, but even proponents of a ban on these weapons seem to set these worries aside: There are other weapons and technologies that jeopardize human dignity as well.

Given this, as well as the ambiguity in the idea, it is preferable to use a variety of concerns in opposition to AWS rather than relying just on human dignity. (A. Sharkey 2019)

Military guidance on weapons has made much of keeping people "in the loop" or "on the loop"; these ways of spelling out "meaningful control" are explored in (Santoni de Sio and van den Hoven 2018).

There has been discussion of the difficulty of assigning responsibility for killings by an autonomous weapon, and a "responsibility gap" has been proposed (e.g., Rob Sparrow 2007), meaning that neither the human nor the machine can be held accountable.

On the other hand, we don't presume that someone is to blame for every occurrence; instead, the true problem may be risk allocation (Simpson and Müller 2016).

According to risk analysis (Hansson 2013), it is critical to determine who is exposed to risk, who stands to benefit, and who makes the decisions (Hansson 2018: 1822–1824).

Machine Ethics

Machine ethics is the study of ethics for machines, or "ethical machines," as opposed to the human usage of machines as objects.

It's not always clear if this is meant to encompass all of AI ethics or just a portion of it (Floridi and Sanders 2004; Moor 2006; Anderson and Anderson 2011; Wallach and Asaro 2017).

At times it seems that a (dubious) inference is at work here: if robots act in morally significant ways, then we need a machine ethics.

As a result, some people employ a wider definition: machine ethics is concerned with ensuring that robots' conduct toward humans, and maybe other machines, is morally acceptable. (Anderson and Anderson 2007: 15)

This might involve simple product safety concerns, for example.

Other authors sound more ambitious, but they use a narrower definition: AI reasoning should be able to consider societal values, moral and ethical considerations; weigh the relative priorities of values held by different stakeholders in various multicultural contexts; explain its reasoning; and ensure transparency. (Dignum 2018: 1–2)

Some of the debate in machine ethics is predicated on the idea that robots may be ethical agents accountable for their acts, or "autonomous moral agents," in some way (see van Wynsberghe and Robbins 2019).

The basic idea of machine ethics is now making its way into practical robotics, where the assumption that these machines are artificial moral agents in any meaningful sense is seldom made (Winfield et al. 2019).

It has been noted that a robot programmed to follow ethical rules can readily be reprogrammed to follow unethical ones (Vanderelst and Winfield 2018).

Isaac Asimov famously explored the idea that machine ethics might take the form of "rules," proposing "three laws of robotics" (Asimov 1942):

First Law: A robot may not injure a human being or, through inaction, allow a human being to come to harm.

Second Law: A robot must obey the orders given to it by human beings, except where such orders would conflict with the First Law.

Third Law: A robot must protect its own existence as long as such protection does not conflict with the First or Second Laws.

In a series of scenarios, Asimov demonstrated how, despite their hierarchical organization, conflicts between these three rules would make it difficult to apply them.
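One simplified way to read the hierarchical organization of the three laws is as a strict priority ordering, in which any violation of a higher law outweighs every violation of a lower one. The Python sketch below illustrates that reading only. The `Action` fields and the `choose` function are hypothetical names introduced here, and reducing each law to a yes/no flag is a strong assumption that no real robot or Asimov story relies on.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    name: str
    harms_human: bool      # would violate the First Law
    disobeys_order: bool   # would violate the Second Law
    endangers_self: bool   # would violate the Third Law


def violations(action):
    # Ordered by law priority; tuples compare lexicographically,
    # so a First Law violation outweighs anything below it.
    return (action.harms_human, action.disobeys_order, action.endangers_self)


def choose(actions):
    """Return every action whose violation profile is the least severe."""
    best = min(violations(a) for a in actions)
    return [a for a in actions if violations(a) == best]


# The ordering settles this case: obeying outranks self-preservation.
options = [
    Action("carry out the order", False, False, True),
    Action("stay safe and refuse", False, True, False),
]

# The ordering does not settle this one: both options break the First Law.
dilemma = [
    Action("swerve left", True, False, False),
    Action("swerve right", True, False, False),
]

print([a.name for a in choose(options)])
print([a.name for a in choose(dilemma)])
```

In the second case both options are returned, which shows that a priority ordering alone cannot resolve a conflict within a single law.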

Weaker versions of "machine ethics" risk reducing "having an ethics" to notions that would not ordinarily be considered sufficient (e.g., without "reflection" or even without "action"); stronger notions that move toward artificial moral agents may describe a set that is currently empty.

Artificial Moral Agents

If one considers machine ethics to be about moral agents in any meaningful way, these agents might be referred to as "artificial moral agents" with rights and obligations.

However, the debate over artificial entities challenges a number of common ethical notions, and it can be very useful to understand these notions in abstraction from the human case (cf. Misselhorn 2020; Powers and Ganascia forthcoming).

Several writers use the term "artificial moral agent" in a less demanding sense, borrowing from the use of "agent" in software engineering, where questions of responsibility and rights do not arise (Allen, Varner, and Zinser 2000).

James Moor (2006) distinguishes four types of machine agents: ethical impact agents (e.g., robot jockeys), implicit ethical agents (e.g., safe autopilot), explicit ethical agents (e.g., using formal methods to estimate utility), and full ethical agents, who "can make explicit ethical judgments and generally is competent to reasonably justify them"; an ordinary adult human is a full ethical agent.

Several approaches to achieving "explicit" or "full" ethical agents have been proposed: programming the ethics in (operational morality), "developing" the ethics itself (functional morality), and finally full-blown morality with full intelligence and sentience (Allen, Smit, and Wallach 2005; Moor 2006).

Programmed agents are sometimes not regarded as "full" agents because, like neurons in the brain, they are "competent without comprehension" (Dennett 2017; Hakli and Mäkelä 2019).

In some debates, the concept of a "moral patient" plays a role: ethical agents have responsibilities, while ethical patients have rights because harm to them matters.

Some entities, such as simple animals that can feel pain but cannot make rational decisions, seem to be patients without being agents.

On the other hand, it is often assumed that all agents will be patients as well (e.g., in a Kantian framework).

Being a person is usually taken to be what makes an entity a responsible agent, someone who can have duties and be the object of ethical concern.

Such personhood is typically a deep notion associated with phenomenal consciousness, intention, and free will (Frankfurt 1971; Strawson 1998).

Torrance (2011) proposes that "artificial (or machine) ethics could be defined as designing machines that do things that, when done by humans, are indicative of the possession of 'ethical status' in those humans" (2011: 116). He takes this status to consist of "ethical productivity and ethical receptivity" (2011: 117), his expressions for moral agents and patients.

Responsibility for Robots

There is widespread agreement that accountability, liability, and the rule of law are fundamental requirements that must be upheld in the face of new technologies (European Group on Ethics in Science and New Technologies 2018, 18), but the question in the case of robots is how to do so and how responsibility should be distributed.

If robots act, will they themselves be held responsible, liable, or accountable for their conduct? Or should the distribution of risk take precedence over discussions of responsibility? A traditional distribution of responsibility already exists: a car maker is responsible for the vehicle's technical safety, a driver is responsible for driving, a mechanic is responsible for proper maintenance, and the public authorities are responsible for the technical condition of the roads, among other things.

Generally speaking: The outcomes of AI-based choices or actions are often the consequence of countless interactions involving many actors, including designers, developers, users, software, and hardware.... Distributed agency entails distributed responsibility. (Taddeo and Floridi 2018: 751)

The manner in which this distribution occurs is not a problem unique to AI, but it takes on added significance in this context (Nyholm 2018a, 2018b).

Distributed control is often performed in traditional control engineering using a control hierarchy and control loops that span these hierarchies.

Rights for Robots

According to some scholars, the question of whether current robots should be granted rights deserves serious consideration (Gunkel 2018a, 2018b; Danaher forthcoming; Turner 2019).

This position seems to rest mostly on criticism of its opponents and on the empirical observation that robots and other non-persons are sometimes treated as though they had rights.

In this spirit, a "relational turn" has been proposed: if we relate to robots as if they had rights, we may be well advised not to investigate whether they "actually" have them (Coeckelbergh 2010, 2012, 2018).

This raises the question of how far such anti-realism or quasi-realism can go, and what it means to say, from a human-centered perspective, that "robots have rights" (Gerdes 2016).

Bryson, on the other hand, has said that robots should not have rights (Bryson 2010), albeit she acknowledges that this is a possibility (Gunkel and Bryson 2014).

Whether robots (or other AI systems) should be given the status of "legal entities" or "legal persons" is a separate question.

Governments, firms, and organizations are "entities" in this sense: they can have legal rights and obligations.

The European Parliament has discussed giving robots this status to deal with civil liability (EU Parliament 2016; Bertolini and Aiello 2018), but not criminal liability, which is reserved for natural persons.

It would also be feasible to give robots merely a subset of rights and responsibilities.

"Such legislative action would be ethically unnecessary and legally difficult," it has been said, since it would not promote the interests of humanity (Bryson, Diamantis, and Grant 2017: 273).

There has long been a debate in environmental ethics concerning the legal rights of natural things such as trees (C. D. Stone 1972).

It has also been suggested that the ethical justifications for constructing robots with rights, or artificial moral patients, in the future are dubious (van Wynsberghe and Robbins 2019).

Among researchers in the "artificial consciousness" community, some writers have called for a "moratorium on synthetic phenomenology," since creating such consciousness would presumably imply ethical obligations to a sentient being, such as not harming it and not ending its existence by switching it off (Bentley et al. 2018: 28f).

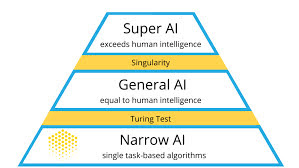

Singularity and Superintelligence

The goal of modern AI, according to some, is to create an "artificial general intelligence" (AGI), as opposed to a technical or "narrow" AI.

AGI is usually distinguished from traditional concepts of AI as a general-purpose system, and from Searle's notion of "strong AI," in which computers given the right programs can literally be said to understand and have other cognitive states (Searle 1980: 417–420).

The concept of singularity holds that if artificial intelligence progresses to the point where systems have a human level of intelligence, these systems will themselves be able to construct AI systems that surpass human intelligence, i.e., systems that are "superintelligent" (see below).

Such superintelligent AI systems would rapidly enhance themselves or evolve into ever more intelligent systems.

This abrupt change in circumstances after achieving superintelligent AI is known as the "singularity," a point at which AI development is beyond human control and difficult to anticipate (Kurzweil 2005: 487).

The fear that "the robots we built will take over the world" captured the human imagination long before computers existed (e.g., Butler 1863) and is the central theme of Čapek's famous play (Čapek 1920), which popularized the term "robot." I. J. Good first formulated this worry as a possible trajectory from existing AI to an "intelligence explosion":

Let an ultraintelligent machine be defined as a machine that can far surpass all the intellectual activities of any man, however clever.

Since the design of machines is one of these intellectual activities, an ultraintelligent machine could design even better machines; there would then unquestionably be an "intelligence explosion," and the intelligence of man would be left far behind.

Thus the first ultraintelligent machine is the last invention that man need ever make, provided that the machine is docile enough to tell us how to keep it under control. (Good 1965: 33)

Kurzweil (1999, 2005, 2012) spells out the optimistic argument from acceleration to singularity, pointing out that computing power has been increasing exponentially, i.e., doubling every 2 years since 1970 in accordance with "Moore's Law" on the number of transistors, and will continue to do so for some time in the future.

Kurzweil (1999) predicted that supercomputers would reach human computation capacity by 2010, that "mind uploading" would be achievable by 2030, and that the "singularity" would occur by 2045.

Kurzweil speaks of an increase in the computing power that can be purchased at a given cost, but the funds available to AI companies have also risen dramatically in recent years: according to Amodei and Hernandez (2018), the actual computing power available to train a particular AI system doubled every 3.4 months from 2012 to 2018, resulting in a 300,000x increase, not the 7x increase that doubling every two years would have produced.
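The gap between the two growth figures follows from the arithmetic of doubling times. The Python sketch below uses only the numbers quoted above. The function names are illustrative, and the time span is back-calculated from the quoted 300,000x figure rather than taken from the cited source, so the slower-growth result is approximate.

```python
import math


def growth_factor(elapsed_months, doubling_months):
    """Multiplicative growth after elapsed_months at a fixed doubling time."""
    return 2 ** (elapsed_months / doubling_months)


def months_to_reach(factor, doubling_months):
    """Months needed to grow by `factor` at a fixed doubling time."""
    return math.log2(factor) * doubling_months


# Span implied by a 300,000x increase at a 3.4-month doubling time.
span = months_to_reach(300_000, 3.4)

# Growth over that same span if doubling took two years (24 months).
slow = growth_factor(span, 24)

print(f"implied span: {span:.1f} months")
print(f"growth at a two-year doubling time: {slow:.1f}x")
```

Under these assumptions the implied span is a little over five years, and a two-year doubling time yields only a single-digit multiple over the same period, the same order of magnitude as the quoted 7x.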

A popular version of this argument (Chalmers 2010) speaks of a growth in the AI system's "intelligence" (rather than raw computing power), but the critical point of "singularity" remains the moment at which AI systems take over further AI development and accelerate it beyond human level.

Bostrom (2014) discusses at great length what might happen at that point and the dangers it poses to humanity.

Eden et al. (2012), Armstrong (2014), and Shanahan (2015) summarize the topic.

Besides increasing computing power, there are other possible paths to superintelligence, such as complete emulation of the human brain on a computer (Kurzweil 2012; Sandberg 2013), biological paths, or networks and organizations (Bostrom 2014: 22–51).

Despite the apparent flaws in equating "intelligence" with processing capacity, Kurzweil seems to be correct in his assertion that people tend to underestimate the potential of exponential development.

Mini-test: How far would you travel in 30 steps if each step were twice as long as the previous one, beginning with a one-metre step? (The answer is almost three times the distance to the Moon, Earth's only permanent natural satellite.) Indeed, most progress in AI can be attributed to the availability of processors that are orders of magnitude faster, larger storage, and increased funding (Müller 2018).
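The mini-test can be checked in a few lines of Python. The sketch assumes the first step is one metre and that each later step doubles the previous one. It also assumes an average Earth-Moon distance of about 384,400 km, a figure that does not appear in the text above.

```python
EARTH_MOON_KM = 384_400  # average Earth-Moon distance (assumed figure)


def distance_after(steps, first_step_m=1.0):
    """Total distance in metres when every step doubles the previous one."""
    total, step = 0.0, first_step_m
    for _ in range(steps):
        total += step
        step *= 2
    return total


total_km = distance_after(30) / 1000
ratio = total_km / EARTH_MOON_KM

print(f"{total_km:,.0f} km, about {ratio:.1f} times the Earth-Moon distance")
```

Thirty doubling steps add up to 2^30 - 1 metres, a little over a million kilometres, which is where the "almost three times" in the answer comes from.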

The actual acceleration and its rates are discussed in Müller and Bostrom (2016) and Bostrom, Dafoe, and Flynn (forthcoming); Sandberg (2019) argues that progress will continue for some time.

The participants in this debate are all technophiles in the sense that they expect technology to advance quickly and bring about a wide range of welcome changes, but they are divided into two groups: those who concentrate on benefits (e.g., Kurzweil) and those who focus on risks (e.g., Bostrom).

Both camps sympathize with "transhuman" views of humankind's survival in a different physical form, such as being uploaded to a computer (Moravec 1990, 1998; Bostrom 2003a, 2003c).

They also consider the prospects of "human enhancement" in various respects, including intelligence, often called "IA" (intelligence augmentation).

It may be that future AI will be used for human enhancement, or that it will contribute to the dissolution of the neatly defined single human person.

Robin Hanson offers a comprehensive analysis of what would happen economically if human "brain emulation" allows genuinely intelligent robots or "ems" to be created (Hanson 2016).