The limbic cortex is a region of the brain that neuroanatomists believe is the seat of emotion, addiction, mood, and a variety of other mental and emotional processes.

The limbic system's amygdala, which is responsible for basic survival impulses like fear and aggression, is also known as "the lizard brain" or "the reptilian brain."

- The name comes from the popular notion that a lizard's brain consists of little beyond these survival structures.

- The lizard brain is why you're scared, why you don't make all the art you can, why you don't ship when you can.

- The lizard brain is the root of the resistance.

- The lizard brain is famished, terrified, enraged, and horny.

- The lizard brain is primarily concerned with eating and staying safe.

Because status in the group is necessary for survival, the lizard brain is concerned with what others think.

The greatest line in Barbara Tuchman's 1962 book The Guns of August sums up the failure to plan for a long World War I, among other mistakes:

"The inclination of everyone on both sides was not to prepare for the three tougher alternatives, not to act upon what they knew to be true." She also formulated "Tuchman's Law," a historical observation that has since been described as a psychological principle of "perceptual readiness" or "subjective probability":

Disasters are seldom as widespread as they seem in written accounts. They seem continuous and widespread because they are in the record, but they were more likely irregular in both time and location. Furthermore, as we know from our own experience, the persistence of the normal is typically greater than the impact of the disruption.

After digesting today's news, one expects to be confronted with a society dominated by strikes, crimes, power outages, broken water mains, delayed trains, school closings, muggers, drug addicts, neo-Nazis, and rapists.

On a fortunate day, one may get home in the evening without having seen more than one or two of these occurrences. As a result, I developed Tuchman's Law, which states that "the fact of being reported increases the seeming magnitude of any terrible development by five- to tenfold."

~ Barbara W. Tuchman

In other words, people prefer to read about spectacular and overblown occurrences, so events are portrayed as continuous and widespread.

- In history and the news, the negative elements of events are often highlighted, while chroniclers frequently overlook the good sides of significant occurrences.

- Startup failures, for example, are widely publicized, while the achievements of the successful few are seldom covered, or are recorded only in small print.

Psychologists have also examined many other cognitive biases, such as groupthink, fear of authority, failure of imagination, and hyper-rationality.

- William Whyte coined the word "groupthink," and Irving Janis subsequently developed the theory of groupthink to explain the poor decision-making that may occur in groups as a consequence of the forces that hold them together.

- The extreme fear of authority is classified as a type of social phobia by mental health professionals.

- The biggest adversary of truth, according to Albert Einstein, is blind obedience to authority.

- When something seems obvious to those in the know and foreseeable (especially in retrospect), and yet no preparation is made for the unfavorable result, it is called a failure of imagination.

- A lack of imagination arises when a person cannot, or refuses to, draw elements from past experiences and combine them into an imagined scenario.

- Reduced constructive episodic simulation has been linked to old age and to reliance on other kinds of memory recall.

Hyper-rationality is a defense mechanism against anything that is dangerous or unsettling. It describes circumstances in which reason has been pushed beyond its logical boundaries.

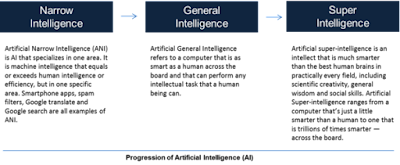

Artificial Intelligence (AI), on the other hand, offers a distinct perspective on independence: rational, transparent, ever-changing, relentless, and dispassionate.

- When applied to real-world circumstances, a range of methods using properly designed self-learning and AI technologies can reduce or overcome cognitive biases.

- Depending on human-driven ethical norms, technology may bring both benefit and harm.

- If governed by ethical norms and laws, AI stands a good chance of becoming a basis for technology that overcomes human weakness.

- Biases of various kinds will have varying consequences, but they will always be detrimental.

- The four biases result in fear of judgement, fear of failure, fear of the unknown, and fear of the irrational.

- These fears lead people to leave, hide, delay, and freeze, none of which is a desirable result for businesses or individuals.

This seems to be the most common pattern observed in contemporary management, particularly given the focus on conflicts of interest and fiduciary responsibilities.

Without any technical knowledge on the board or in management, it is virtually a foregone conclusion that the "correct" thing to do is to do nothing. However, AI has a lot to offer.

~ Jai Krishna Ponnappan
