In 2019, a group of academics released a meta-analysis of studies examining whether a person's mood can be determined from their facial movements.

They came to the conclusion that there is no evidence that emotional state can be predicted from expression, regardless of whether the assessment is made by a person or by technology.

The coauthors noted, "[Facial expressions] in question are not 'fingerprints' or diagnostic displays that dependably and explicitly convey distinct emotional states independent of context, person, or culture."

"It's impossible to deduce pleasure from a grin, anger from a scowl, or grief from a frown with certainty."

Alan Cowen might dispute this conclusion. An ex-Google scientist, he is the founder of Hume AI, a new research lab and "empathetic AI" firm emerging from stealth today.

Hume claims to have created datasets and models that "react beneficially to [human] emotion signals," allowing clients ranging from large tech firms to startups to recognize emotions based on a person's visual, vocal, and spoken expressions.

"When I first entered the area of emotion science, the majority of researchers were focusing on a small number of posed emotional expressions in the lab," Cowen said.

"I wanted to apply data science to study how individuals genuinely express emotion out in the world, spanning ethnicities and cultures.

"I uncovered a new universe of nuanced and complicated emotional behaviors that no one had ever recorded before using new computational approaches, and I was quickly publishing in the top journals. That's when businesses started contacting me."

Hume, which has 10 employees and recently secured $5 million in funding, claims to train its emotion-recognizing algorithms using "huge, experimentally-controlled, culturally varied" datasets from people throughout North America, Africa, Asia, and South America.

Regardless of the data's representativeness, some experts doubt the premise that emotion-detecting algorithms have a scientific basis.

"The kindest view I have is that there are some really well-intentioned folks who are naive enough that... the issue they're attempting to cure is caused by technology," said Os Keyes, an AI ethics scientist at the University of Washington.

"Their first offering raises severe ethical concerns... It's evident that they aren't addressing the topic as a problem to be addressed, interacting deeply with it, and contemplating the potential that they aren't the first to conceive of it."

HireVue, Entropik Technology, Emteq, Neurodata Labs, Nielsen-owned Innerscope, Realeyes, and Eyeris are among the businesses in the emerging "emotional AI" sector.

Entropik says that its technology, which it sells to companies wishing to track the effectiveness of their marketing efforts, can interpret emotions "through facial expressions, eye gazing, speech tone, and brainwaves."

Neurodata created software that Russian bank Rosbank uses to assess the emotional state of clients calling customer support centers.

Emotion AI is being funded by more than just startups.

In 2016, Apple bought Emotient, a San Diego company that develops AI systems to assess facial expressions.

When Alexa senses irritation in a user's voice, it apologizes and asks for clarification.

Nuance, a speech recognition firm that Microsoft bought in April 2021, has shown off a product for automobiles that assesses driver emotions based on facial cues.

In May, Swedish business Smart Eye bought Affectiva, an MIT Media Lab spin-off that claimed it could identify rage or dissatisfaction in speech in 1.2 seconds.

According to Markets & Markets, the emotion AI market is expected to almost double in size from $19 billion in 2020 to $37.1 billion in 2026.

Venture investors eager to get in on the ground floor have put hundreds of millions of dollars into firms like Affectiva, Realeyes, and Hume.

According to the Financial Times, film studios such as Disney and 20th Century Fox are using the technology to gauge public response to new series and films.

Meanwhile, marketing firms have been testing the technology for clients like Coca-Cola and Intel to examine how audiences react to commercials.

The difficulty is that there are few – if any – universal indicators of emotion, which calls into doubt the accuracy of emotion AI.

The bulk of emotion AI businesses are based on psychologist Paul Ekman's seven basic emotions (joy, sorrow, surprise, fear, anger, disgust, and contempt), which he introduced in the early 1970s.

However, subsequent research has confirmed the common-sense notion that people from diverse backgrounds express their emotions in quite different ways.

Context, conditioning, relationality, and culture all have an impact on how individuals react to situations.

For example, scowling, which is commonly linked with anger, has been observed to appear on the faces of angry people less than 30% of the time.

In Malaysia, the supposedly universal expression for fear is the stereotype for a threat or anger.

Ekman himself later demonstrated that there are disparities in how American and Japanese students respond to violent films, with Japanese students adopting "a whole distinct set of emotions" if another person is around, especially an authority figure.

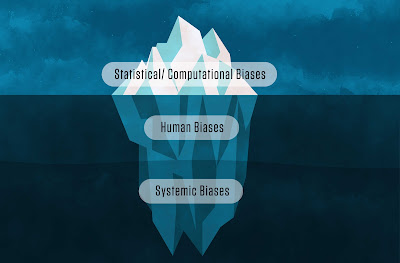

Gender and racial biases in face analysis algorithms have been extensively documented, and are caused by imbalances in the datasets used to train the algorithms.

In general, an AI system that has been trained on photographs of lighter-skinned people may struggle with skin tones that are unfamiliar to it.

This isn't the only kind of prejudice that exists.

Retorio, an AI hiring tool, was found to react differently to the same applicant wearing glasses versus wearing a headscarf.

Researchers from MIT, the Universitat Oberta de Catalunya in Barcelona, and the Universidad Autonoma de Madrid showed in a 2020 study that algorithms may become biased toward specific facial expressions, such as smiling, which lowers recognition accuracy.

Researchers from the University of Cambridge and the Middle East Technical University discovered that at least one of the public datasets often used to train emotion recognition systems was skewed.

It contains substantially more Caucasian faces than Asian or Black ones.

Highlighting the consequences, recent research has shown that major vendors' emotion analysis programs assign more negative feelings to Black men's faces than to white men's faces.

Voices vary as well: people with disabilities, people with conditions like autism, and people who communicate in other languages and dialects, such as African-American Vernacular English (AAVE), all sound different.

A native French speaker taking a survey in English might hesitate or pronounce a word with some uncertainty, which an AI system might misinterpret as an emotion signal.

Despite the faults in the technology, some businesses and governments are eager to use emotion AI to make high-stakes judgments.

Employers use it to assess prospective workers by giving them a score based on their empathy or emotional intelligence.

It's being used in schools to track students' engagement in class, and even while they do homework at home.

Emotion AI has also been tried at border checkpoints in the United States, Hungary, Latvia, and Greece to detect "risk persons."

To reduce prejudice, Hume claims that "randomized studies" are used to collect "a vast variety" of facial and voice expressions from "people from a wide range of backgrounds."

According to Cowen, the company has gathered over 1.1 million images and videos of facial expressions from over 30,000 people in the United States, China, Venezuela, India, South Africa, and Ethiopia. It has also collected over 900,000 audio recordings of people voicing their emotions, labeled with the speakers' self-reported emotional experiences.

Hume's dataset is smaller than Affectiva's, which claimed to be the biggest of its sort at the time, with over 10 million people's expressions from 87 countries.

Cowen, however, says that Hume's data can be used to train models to assess "an exceptionally broad spectrum of emotions," including over 28 facial expressions and 25 verbal expressions.

"As demand for our empathetic AI models has grown, we've been prepared to provide access to them at a large scale.

"As a result, we'll be establishing a developer platform that will provide developers and researchers API documentation and a playground," Hume added.

"We're also gathering data and developing training models for social interaction and conversational data, body language, and multi-modal expressions, which we expect will broaden our use cases and client base."

Beyond Mursion, Hume claims it's collaborating with Hoomano, a firm that develops software for "social robots" like Softbank Robotics' Pepper, to build digital assistants that make better suggestions by taking into consideration the emotions of users.

Hume also claims to have collaborated with Mount Sinai and University of California, San Francisco experts to investigate whether its models can detect depression and schizophrenia symptoms "that no prior methodologies have been able to capture."

"A person's emotions have a big impact on their conduct, including what they pay attention to and click on.

"As a result, 'emotion AI' is already present in AI technologies such as search engines, social media algorithms, and recommendation systems. It's impossible to avoid.

"As a result, decision-makers must be concerned about how these technologies interpret and react to emotional signals, influencing their users' well-being in ways that their inventors are unaware of," Cowen remarked.

"Hume AI provides the tools required to guarantee that technologies are built to increase the well-being of their users. There's no way of understanding how an AI system is interpreting these signals and altering people's emotions without means to assess them, and there's no way of designing the system to do so in a way that is compatible with people's well-being."

Leaving aside the thorny issue of using artificial intelligence to diagnose mental illness, Mike Cook, an AI researcher at Queen Mary University of London, believes the company's messaging is "performative" and its language questionable.

"[T]hey've obviously gone to tremendous lengths to speak about diversity and inclusion and other such things, and I'm not going to whine about people creating datasets with greater geographic variety. However, it seems a little like it was massaged by a PR person who knows how to make your organization appear to care," he remarked.

Cowen claims that by forming The Hume Initiative, a nonprofit "committed to governing empathetic AI," Hume is taking a more rigorous look at the uses of emotion AI than rivals.

The Hume Initiative, whose ethics committee includes Taniya Mishra, former director of AI at Affectiva, has established guidelines that the company says it will follow when commercializing its innovations.

The Hume Initiative's principles forbid uses like manipulation, fraud, "optimizing for diminished well-being," and "unbounded" emotion AI.

It also sets constraints for use cases such as platforms and interfaces, health and development, and education. For example, it requires educators to use the output of an emotion AI model to provide constructive, but non-evaluative, feedback.

Danielle Krettek Cobb, the founder of the Google Empathy Lab, Dacher Keltner, a professor of psychology at UC Berkeley, and Ben Bland, the head of the IEEE group establishing standards for emotion AI, are coauthors of the guidelines.

"The Hume Initiative started by compiling a list of all known applications for empathetic AI.

After that, they voted on the first set of specific ethical principles.

The resultant principles are tangible and enforceable, unlike any prior attempt at AI ethics.

They describe how empathetic AI may be used to increase mankind's finest traits of belonging, compassion, and well-being, as well as how it might be used to expose humanity to intolerable dangers," Cowen remarked.

"Those who use Hume AI's data or AI models must agree to use them solely in accordance with The Hume Initiative's ethical rules, guaranteeing that any applications using our technology are intended to promote people's well-being."

Companies have boasted about their internal AI ethics initiatives in the past, only to have such efforts fall by the wayside, or prove to be performative and ineffective.

Google's AI ethics board was notoriously disbanded barely one week after it was established.

Meta's (previously Facebook's) AI ethics unit has also been labeled as essentially useless in reports.

Some refer to this as "ethics washing."

Simply put, ethics washing is the practice of a firm inventing or inflating its interest in fair AI systems that benefit everyone.

A classic example among tech giants is a firm that touts "AI for good" activities on the one hand while selling surveillance technology to governments and companies on the other.

The coauthors of a report published by Trilateral Research, a London-based technology consultancy, claim that ethical principles and norms do not, by themselves, help practitioners grapple with difficult concerns like fairness in emotion AI.

They argue that these should be thoroughly explored to ensure that businesses do not deploy systems that are incompatible with societal norms and values.

"Ethics is made ineffectual without a continual process of challenging what is or may be clear, of probing behind what seems to be resolved, of keeping this interrogation alive," they said.

"As a result, the establishment of ethics into established norms and principles comes to an end."

Cook identifies problems in The Hume Initiative's stated rules, especially in its use of ambiguous terminology.

"A lot of the standards seem performatively written — if you believe manipulating the user is wrong, you'll read the guidelines and think to yourself, 'Yes, I won't do that.' And if you don't care, you'll read the rules and say, 'Yes, I can justify this,'" he explained.

Cowen believes Hume is "open[ing] the door to optimize AI for human and societal well-being" rather than short-term corporate objectives like user engagement.

"We don't have any actual competition since the other AI models for measuring emotional signals are so restricted. They concentrate on a small number of facial expressions, neglect the voice entirely, and have major demographic biases.

"These biases are often woven into the data used to train AI systems.

"Furthermore, no other business has established explicit ethical criteria for the usage of empathetic AI," he said.

"We're building a platform that will consolidate our model deployment and provide customers greater choice over how their data is utilized."

Guidelines or not, lawmakers have already started to limit the use of emotion AI systems.

The New York City Council recently passed a rule requiring employers to notify applicants when they are being evaluated by AI, as well as to audit the algorithms once a year.

In Illinois, candidates must give their consent before video footage of them is analyzed, while Maryland has outlawed the use of facial analysis entirely.

Some firms have voluntarily stopped offering emotion AI services or placed guardrails around them.

HireVue said that its algorithms will no longer use visual analysis.

Microsoft's sentiment-detecting Face API, which once claimed it could detect emotions across cultures, now says in a caveat that "facial expressions alone do not reflect people's interior moods."

The Hume Initiative, according to Cook, "developed some ethical papers so people don't worry about what [Hume] is doing."

"Perhaps the most serious problem I have is that I have no idea what they're doing. Apart from whatever datasets they created, the part that's public doesn't appear to have anything on it," Cook added.

Emotion recognition using AI.

Emotion detection is a hot new field, with a slew of entrepreneurs marketing products that promise to read people's inner emotional states and AI academics attempting to increase computers' capacity to do so.

This is done using voice analysis, body language analysis, gait analysis, eye tracking, and remote measurement of physiological signs such as pulse and respiration rates.

The majority of the time, though, it's done by analyzing facial expressions.

However, a recent study reveals that these products are built on a foundation of intellectual sand.

The main issue is whether people's emotions can be reliably predicted by looking at their faces.

"Whether facial expressions of emotion are universal, whether you can look at someone's face and read emotion in their face," Lisa Feldman Barrett, a professor of psychology at Northeastern University and an expert on emotion, told me, "is a topic of great contention that scientists have been debating for at least 100 years."

Despite this extensive history, she said that no full review of all emotion research conducted over the previous century had ever been completed.

So, a few years ago, the Association for Psychological Science gathered five eminent scientists from opposing viewpoints to undertake a "systematic evaluation of the data challenging the popular opinion" that emotion can be consistently predicted by outward facial movements.

According to Barrett, who was one of the five scientists, they "had extremely divergent theoretical ideas."

"We arrived at the project with very different assumptions of what the data would reveal, and it was our responsibility to see if we could come to an agreement on what the data revealed and how to best interpret it. We weren't sure we could do it since it's such a divisive issue."

The process, which was supposed to take a few months, took two years.

Nonetheless, after evaluating over 1,000 scientific studies in the psychology literature, these experts arrived at a unanimous conclusion: the idea that "a person's emotional state may be simply determined from his or her facial expressions" has no scientific basis.

According to the researchers, there are three common misconceptions "about how emotions are communicated and interpreted in facial movements."

The relationship between facial expressions and emotions is not reliable (the same emotions are not always expressed in the same manner), specific (the same facial expression does not always indicate the same emotion), or generalizable (the effects of different cultures and contexts have not been sufficiently documented).

"A scowling face may or may not be an indication of anger," Barrett said to me. "People scowl in anger at times, and at other times they might smile, cry, or simply seethe with a neutral look. People scowl at other times as well, such as when they're perplexed, concentrating, or having gas."

According to the researchers, these results do not suggest that individuals move their faces at random or that [facial expressions] have no psychological significance.

Instead, they show that the facial configurations in question aren't "fingerprints" or diagnostic displays that consistently and explicitly convey various emotional states independent of context, person, or culture.

It's impossible to deduce pleasure from a grin, anger from a scowl, or sorrow from a frown, as most of today's technology seeks to accomplish when applying what are incorrectly considered to be scientific principles.

This work matters because an entire industry of automated, purported emotion-reading technologies is rapidly growing.

The market for emotion detection software is expected to reach at least $3.8 billion by 2025, according to our recent research on "Robot Surveillance."

Emotion detection (also known as "affect recognition" or "affective computing") is already being used in devices for marketing, robotics, driving safety, and audio "aggression detectors," as we recently reported.

Emotion recognition is built on the same fundamental premise as polygraphs, or "lie detectors": that a person's internal mental state can be reliably correlated with physical bodily movements and conditions.

It can't, and that very much includes facial muscles.

It stands to reason that what is true of facial muscles would also be true of all other techniques of detecting emotion, such as body language and gait.

However, the belief that such mind reading is possible can cause serious harm.

A jury's cultural misunderstanding of what a foreign defendant's facial expressions mean, for example, can lead to a death sentence rather than a prison sentence.

When such thinking is built into automated systems, it may lead to further harm.

For example, a "smart" body camera that incorrectly informs a police officer that someone is hostile and angry might lead to an unnecessary shooting.

"There is no automatic emotion identification.

The top algorithms, given a face (full frontal, no occlusions, optimal illumination), are excellent at recognizing facial movements.

They aren't able, however, to deduce what those facial gestures signify."

~ Jai Krishna Ponnappan

