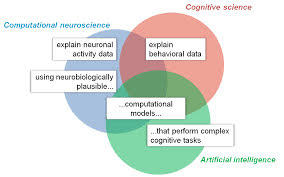

Computational neuroscience (CNS) is a branch of neuroscience that applies the notion of computation to the study of the brain.

Eric Schwartz coined the phrase "computational neuroscience" in 1985 to replace the terms "neural modeling" and "brain theory," which were previously used to describe different forms of nervous system study.

At the heart of CNS is the idea that effects in the nervous system can be viewed as instances of computation, since state transitions can be explained as relations between abstract properties.

In other words, explanations of effects in neurological systems are descriptions of information being transformed, stored, and represented, rather than causal descriptions of the interaction of physically distinct elements.

As a result, CNS aims to develop computational models to better understand how the nervous system works in terms of the information processing characteristics of the brain's parts.

Constructing a model of how interacting neurons might build basic components of cognition is one example.

A brain map, on the other hand, does not reveal the nervous system's computational process, but it may be used as a constraint on theoretical models.

Information exchange, for example, has a cost in terms of the physical connections between communicating areas, so areas that interact often (with high bandwidth and low latency) would be expected to cluster together.

Describing neural systems as carrying out computations is central to computational neuroscience, and it contradicts the claim that computational constructs are exclusive to the explanatory framework of psychology, that is, the claim that human cognitive capacities can be modeled and validated independently of how they are implemented in the nervous system.

For example, in 1973, when it became clear that cognitive processes could not be understood by analyzing the results of one-dimensional questions or scenarios (a popular approach in cognitive psychology at the time), Allen Newell argued that only synthesis through computer simulation could reveal the complex interactions of a proposed component mechanism and whether that mechanism was correct.

David Marr (1945–1980) proposed the first computational neuroscience framework.

This framework offers a conceptual starting point for thinking about levels in the context of computation by neural structures.

It mirrors the three-level structure used in computer science (abstract problem analysis, algorithm, and physical implementation).

The model has drawbacks, however: it is made up of three loosely linked levels and takes a rigid top-down approach that sets aside neurobiological facts by treating them all as details of the implementation level.

As a result, certain phenomena are thought to be explicable on just one or two levels.

Moreover, the Marr levels framework does not correspond to the levels of nervous system organization (molecules, synapses, neurons, nuclei, circuits, networks, layers, maps, and systems), nor does it explain the emergent features of the nervous system.

Computational neuroscience takes a bottom-up approach, beginning with neurons and showing how dynamic interactions between neurons can implement computational functions.

Models of connectivity and dynamics, decoding models, and representational models are the three kinds of models that try to derive computational understanding from brain-activity data.

Connectivity models use the correlation matrix, which captures pairwise functional connectivity between locations and characterizes the properties of connected areas.
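
As a rough illustration of the correlation matrix mentioned above, the sketch below computes pairwise functional connectivity from simulated regional time series. The data, the number of regions, and the shared signal driving regions 0 and 1 are all assumptions made up for the example, not measurements.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical data: 200 time points recorded from 4 brain regions.
n_timepoints, n_regions = 200, 4
shared = rng.standard_normal(n_timepoints)  # common signal driving regions 0 and 1
timeseries = rng.standard_normal((n_timepoints, n_regions))
timeseries[:, 0] += 2.0 * shared
timeseries[:, 1] += 2.0 * shared

# np.corrcoef treats each row as one variable, so regions must be rows.
connectivity = np.corrcoef(timeseries.T)

print(np.round(connectivity, 2))
```

Regions 0 and 1 should show a high pairwise correlation because they share a common input, while the other pairs stay near zero.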

Analyses of effective connectivity and large-scale brain dynamics go beyond generic statistical models, such as the linear models used in activation-based and information-based brain mapping, because they are generative models: they can generate data at the level of the measurements and are models of brain dynamics.

The goal of the decoding models is to figure out what information is stored in each brain area.

When an area is designated as "knowledge-representing," its activity is treated as a functional entity that informs the regions receiving its signals about the content.

In the simplest scenario, decoding identifies which of two stimuli elicited a recorded response pattern.
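
The two-stimulus case can be sketched with a nearest-centroid decoder. This is only one of many possible decoders, chosen here for brevity; the response patterns, noise level, and trial counts are simulated assumptions.

```python
import numpy as np

rng = np.random.default_rng(1)

n_trials, n_voxels = 40, 20
# Hypothetical mean response patterns for stimulus A and stimulus B.
pattern_a = rng.standard_normal(n_voxels)
pattern_b = rng.standard_normal(n_voxels)

def simulate(pattern, n):
    """Return n noisy response patterns scattered around a mean pattern."""
    return pattern + 0.5 * rng.standard_normal((n, n_voxels))

train_a, train_b = simulate(pattern_a, n_trials), simulate(pattern_b, n_trials)
test_a, test_b = simulate(pattern_a, n_trials), simulate(pattern_b, n_trials)

# "Training" is just averaging the response patterns for each stimulus.
centroid_a = train_a.mean(axis=0)
centroid_b = train_b.mean(axis=0)

def decode(responses):
    """Label each response 'A' or 'B' according to the nearer training centroid."""
    dist_a = np.linalg.norm(responses - centroid_a, axis=1)
    dist_b = np.linalg.norm(responses - centroid_b, axis=1)
    return np.where(dist_a < dist_b, "A", "B")

correct = np.concatenate([decode(test_a) == "A", decode(test_b) == "B"])
accuracy = correct.mean()
print(f"decoding accuracy: {accuracy:.2f}")
```

Above-chance accuracy on held-out trials indicates that the simulated area carries information about stimulus identity, which is all that decoding by itself establishes.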

The representation's content might be the sensory stimulus's identity, a stimulus feature (such as orientation), or an abstract variable required for a cognitive operation or action.

Decoding and multivariate pattern analysis have been used to determine the components that must be included in a computational model of the brain.

Decoding, on the other hand, does not provide models of brain computation; rather, it reveals certain aspects of it without specifying the computation itself.

Because they strive to characterize an area's responses to arbitrary stimuli, representational models go beyond decoding.

Encoding models, pattern component models, and representational similarity analysis are three forms of representational model analysis that have been presented.

All three analyses are based on multivariate descriptions of the experimental conditions and test hypotheses about the representational space.

In encoding models, the activity profile of each voxel across stimuli is predicted as a linear combination of the model's features.
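
A minimal sketch of a linear encoding model, assuming simulated data: each voxel's response is generated as a weighted sum of stimulus features plus noise, and the weights are then recovered by ordinary least squares. The feature matrix, the `true_weights`, and the 80/20 split are hypothetical choices for illustration.

```python
import numpy as np

rng = np.random.default_rng(2)

n_stimuli, n_features, n_voxels = 100, 5, 3
features = rng.standard_normal((n_stimuli, n_features))     # model features per stimulus
true_weights = rng.standard_normal((n_features, n_voxels))  # hypothetical ground truth
noise = 0.1 * rng.standard_normal((n_stimuli, n_voxels))
responses = features @ true_weights + noise                 # simulated voxel responses

# Fit on the first 80 stimuli and predict responses to the 20 held-out stimuli.
train, test = slice(0, 80), slice(80, None)
weights, *_ = np.linalg.lstsq(features[train], responses[train], rcond=None)
predicted = features[test] @ weights

# Per-voxel correlation between predicted and measured held-out responses.
scores = [
    np.corrcoef(predicted[:, v], responses[test][:, v])[0, 1]
    for v in range(n_voxels)
]
print(np.round(scores, 3))
```

Evaluating on stimuli that were not used for fitting is what lets an encoding model be tested on its predictions for new stimuli rather than on its fit alone.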

The distribution of the activity profiles that define the representational space is treated as a multivariate normal distribution in pattern component models.

The representational space is defined by the representational dissimilarities of the activity patterns evoked by the stimuli in representational similarity analysis.
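
The sketch below builds a representational dissimilarity matrix (RDM) from simulated activity patterns and compares it with the RDM of a hypothetical model. Correlation distance and a Pearson comparison of the RDMs are assumptions made for simplicity; published analyses often use other distance measures and rank correlations.

```python
import numpy as np

rng = np.random.default_rng(3)

n_stimuli, n_voxels = 6, 50
patterns = rng.standard_normal((n_stimuli, n_voxels))
# Make stimuli 0 and 1 evoke similar patterns (an assumption for illustration).
patterns[1] = patterns[0] + 0.3 * rng.standard_normal(n_voxels)

def rdm(activity):
    """Dissimilarity matrix: 1 minus the Pearson correlation between patterns."""
    return 1.0 - np.corrcoef(activity)

brain_rdm = rdm(patterns)

# Hypothetical model representation: a noisy copy of the measured patterns.
model_patterns = patterns + 0.5 * rng.standard_normal((n_stimuli, n_voxels))
model_rdm = rdm(model_patterns)

# Compare the two RDMs on their upper triangles, excluding the diagonal.
upper = np.triu_indices(n_stimuli, k=1)
similarity = np.corrcoef(brain_rdm[upper], model_rdm[upper])[0, 1]
print(f"RDM correlation: {similarity:.2f}")
```

Because the comparison happens at the level of dissimilarities, the model and the brain data do not need to share units or dimensionality.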

These brain-activity models do not test the properties that indicate how the information processing behind a cognitive function might work.

Task performance models are used to describe cognitive processes in terms of algorithms.

These models are put to the test using experimental data and, in certain cases, data from brain activity.

Neural network models and cognitive models are the two basic types of task performance models.

Models of neural networks are created using varying degrees of biological information, ranging from neurons to maps.

Neural networks, which embody the parallel distributed processing paradigm, support multiple stages of linear-nonlinear signal transformation.
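
A stack of linear-nonlinear stages can be sketched as a small feedforward network. The layer sizes, random weights, and the choice of ReLU as the nonlinearity are assumptions for illustration; nothing here is trained or fitted to data.

```python
import numpy as np

rng = np.random.default_rng(4)

def relu(x):
    """Elementwise rectification, used here as the nonlinear step."""
    return np.maximum(x, 0.0)

def forward(inputs, layers):
    """Apply each (weights, bias) pair as a linear map followed by a ReLU."""
    activity = inputs
    for weights, bias in layers:
        activity = relu(activity @ weights + bias)
    return activity

layer_sizes = [8, 16, 4]  # input units, hidden units, output units
layers = [
    (0.5 * rng.standard_normal((n_in, n_out)), np.zeros(n_out))
    for n_in, n_out in zip(layer_sizes[:-1], layer_sizes[1:])
]

stimuli = rng.standard_normal((10, 8))  # 10 hypothetical input patterns
output = forward(stimuli, layers)
n_parameters = sum(w.size + b.size for w, b in layers)

print(output.shape, n_parameters)  # (10, 4) 212
```

Even this toy network has 212 parameters; scaling the layer sizes up shows how quickly the count grows.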

To improve task performance, models often incorporate millions of parameters (connection weights).

Simple models cannot describe complex cognitive processes, so a large number of parameters is required.

Deep convolutional neural network models have been used to predict brain representations of novel images in the ventral visual stream of primates.

The representations in the first few layers of neural networks are comparable to those in the early visual cortex.

Higher layers resemble the inferior temporal cortical representation in that both allow for the decoding of object location, size, and pose, as well as the object's category.

Various studies have shown that the internal representations of deep convolutional neural networks provide the best current models of visual image representations in the inferior temporal cortex in humans and animals.

When a large number of models were compared, those that were optimized for object categorization described the cortical representation best.

Cognitive models are artificial intelligence applications in computational neuroscience that target information processing without including any neurobiological components (neurons, axons, etc.).

Production systems, reinforcement learning, and Bayesian cognitive models are the three kinds of cognitive models.

They use logic and predicates, and they work with symbols rather than signals.

There are several reasons for employing artificial intelligence in computational neuroscience research.

- First, although a vast quantity of information about the brain has accumulated over time, a true understanding of how the brain functions is still lacking.

- Second, there are embedded effects created by networks of neurons, but how these networks operate is still unknown.

- Third, although the brain has been crudely mapped, and the roles of distinct brain areas (mostly in sensory and motor functions) are broadly understood, a precise map is still lacking.

Furthermore, some of the information gathered via experiments or observations may be irrelevant, and the link between synaptic learning principles and computation remains largely unclear.

Production system models were the earliest models for explaining reasoning and problem solving.

A "production" is a cognitive action triggered by an "if-then" rule, in which the "if" clause defines the set of conditions under which the action in the "then" clause may be carried out.

When the conditions of several rules are satisfied at once, the model uses a conflict resolution algorithm to choose the best production.
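
The if-then cycle and conflict resolution described above can be sketched as follows. The `Production` class, the priority-based conflict resolution, and the toy addition task are all hypothetical choices for illustration; real cognitive architectures use richer matching and selection schemes.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass
class Production:
    name: str
    condition: Callable[[dict], bool]  # the "if" clause, tested against memory
    action: Callable[[dict], None]     # the "then" clause, which modifies memory
    priority: int                      # used only for conflict resolution

def run(productions, memory, max_cycles=20):
    """Repeatedly fire the highest-priority production whose condition holds."""
    fired = []
    for _ in range(max_cycles):
        matching = [p for p in productions if p.condition(memory)]
        if not matching:
            break
        chosen = max(matching, key=lambda p: p.priority)  # conflict resolution
        chosen.action(memory)
        fired.append(chosen.name)
    return fired

# Toy task: add b to a by counting up one step at a time.
productions = [
    Production(
        name="increment",
        condition=lambda m: m["goal"] == "add",
        action=lambda m: m.update(a=m["a"] + 1, count=m["count"] + 1),
        priority=1,
    ),
    Production(
        name="stop",
        condition=lambda m: m["goal"] == "add" and m["count"] >= m["b"],
        action=lambda m: m.update(goal="done"),
        priority=2,
    ),
]

memory = {"goal": "add", "a": 2, "b": 3, "count": 0}
fired = run(productions, memory)
print(fired)        # ['increment', 'increment', 'increment', 'stop']
print(memory["a"])  # 5
```

Once the count reaches `b`, both rules match, and the higher priority of `stop` resolves the conflict.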

Production models yield a sequence of productions that resembles a conscious stream of thought.

In newer applications, the same approach is being used to predict regional mean fMRI (functional magnetic resonance imaging) activation time courses.

Reinforcement learning models are used in a variety of fields to model how optimal decision-making is achieved.

In neurobiological systems, the implementation is thought to involve the basal ganglia.

The agent might learn a "value function" that maps each state to its expected cumulative reward.

The agent can pick the most promising action if it can predict which state each action will lead to and knows the values of those states.

The agent could additionally learn a "policy" that maps each state to promising actions.

Exploitation (which provides immediate gratification) and exploration (which benefits learning and brings long-term reward) must be balanced.
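
A value function, a policy, and the exploration-exploitation balance can be sketched with tabular Q-learning on a toy corridor. The environment, the learning rate `alpha`, the discount `gamma`, and the exploration rate `epsilon` are assumptions chosen for illustration, and Q-learning is only one of several reinforcement learning algorithms.

```python
import numpy as np

rng = np.random.default_rng(5)

n_states, n_actions = 5, 2  # corridor of 5 states; actions: 0 = left, 1 = right
goal = n_states - 1         # reaching the rightmost state ends the episode
alpha, gamma, epsilon = 0.1, 0.9, 0.1

def step(state, action):
    """Move one state left or right; reward 1 only on arriving at the goal."""
    next_state = min(state + 1, goal) if action == 1 else max(state - 1, 0)
    reward = 1.0 if next_state == goal else 0.0
    return next_state, reward

q = np.zeros((n_states, n_actions))  # estimated value of each action in each state
for _ in range(500):
    state = 0
    while state != goal:
        if rng.random() < epsilon:
            action = int(rng.integers(n_actions))  # explore: random action
        else:
            best = np.flatnonzero(q[state] == q[state].max())
            action = int(rng.choice(best))         # exploit, ties broken at random
        next_state, reward = step(state, action)
        target = reward + gamma * q[next_state].max()
        q[state, action] += alpha * (target - q[state, action])
        state = next_state

values = q.max(axis=1)     # learned value function
policy = q.argmax(axis=1)  # learned greedy policy
print(np.round(values, 2), policy)
```

States closer to the goal end up with higher values, and the greedy policy moves right in every non-terminal state.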

Bayesian models describe what the brain should compute in order to perform optimally.

These models enable inductive inference, which requires prior knowledge and is beyond the capability of neural network models.

The models have been used to explain cognitive biases as the result of prior beliefs, as well as to understand fundamental sensory and motor processes.
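
How a prior belief can bias an estimate is easiest to see in the Gaussian case, where the posterior has a closed form. The function name and the numbers below are hypothetical; the formulas are the standard result for a Gaussian prior combined with a Gaussian likelihood.

```python
def gaussian_posterior(prior_mean, prior_var, observation, noise_var):
    """Posterior mean and variance for a Gaussian prior and Gaussian likelihood."""
    weight = prior_var / (prior_var + noise_var)  # how much the observation is trusted
    post_mean = prior_mean + weight * (observation - prior_mean)
    post_var = prior_var * noise_var / (prior_var + noise_var)
    return post_mean, post_var

# Prior belief centred on 0; a noisy observation arrives at 10.
reliable = gaussian_posterior(prior_mean=0.0, prior_var=4.0, observation=10.0, noise_var=4.0)
unreliable = gaussian_posterior(prior_mean=0.0, prior_var=4.0, observation=10.0, noise_var=12.0)

print(reliable)    # (5.0, 2.0)
print(unreliable)  # (2.5, 3.0)
```

The noisier the observation, the more the estimate is pulled toward the prior, which is the sense in which a bias can be the optimal result of prior beliefs.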

The representation of probability distributions by neurons, for example, has been investigated theoretically using Bayesian models and compared with experimental evidence.

These efforts illustrate that connecting Bayesian inference to actual brain implementation is still difficult, since the brain "cuts corners" in trying to be efficient, so approximations may explain departures from statistical optimality.

The concept of a brain doing computations is central to computational neuroscience, so researchers are using modeling and analysis of information processing properties of nervous system elements to try to figure out how complex brain functions work.

~ Jai Krishna Ponnappan


See also: Bayesian Inference; Cognitive Computing.

Further Reading

Kaplan, David M. 2011. “Explanation and Description in Computational Neuroscience.” Synthese 183, no. 3: 339–73.

Kriegeskorte, Nikolaus, and Pamela K. Douglas. 2018. “Cognitive Computational Neuroscience.” Nature Neuroscience 21, no. 9: 1148–60.

Schwartz, Eric L., ed. 1993. Computational Neuroscience. Cambridge, MA: Massachusetts Institute of Technology.

Trappenberg, Thomas. 2009. Fundamentals of Computational Neuroscience. New York: Oxford University Press.