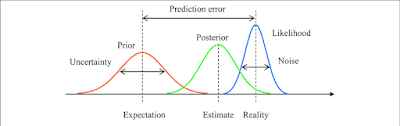

Bayesian inference is a method of calculating the probability that a proposition is true, based on a prior estimate of that probability plus any new and relevant evidence.
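In symbols, the update described above is usually written as follows. The labels $H$ (the proposition or hypothesis) and $E$ (the new evidence) are chosen here for illustration only:

$$
P(H \mid E) = \frac{P(E \mid H)\, P(H)}{P(E)}
$$

Here $P(H)$ is the prior estimate, $P(E \mid H)$ is the probability of seeing the evidence if the proposition is true, $P(E)$ is the overall probability of the evidence, and $P(H \mid E)$ is the revised (posterior) estimate.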

In the twentieth century, Bayes' Theorem, from which Bayesian statistics are derived, was a prominent mathematical technique employed in expert systems.

In the twenty-first century, Bayes' Theorem has been applied to problems such as robot locomotion, weather forecasting, jurimetrics (the application of quantitative methods to law), phylogenetics (the evolutionary relationships among species), and pattern recognition.

It is also used in email spam filters and can be used to solve the famous Monty Hall problem.

The theorem was derived by the Reverend Thomas Bayes (1702–1761) of England and published posthumously in the Philosophical Transactions of the Royal Society of London in 1763 as "An Essay Towards Solving a Problem in the Doctrine of Chances."

It is also known as Bayes' Theorem of Inverse Probabilities.

The first notable discussion of Bayes' Theorem as applied to medical artificial intelligence was a classic article, "Reasoning Foundations of Medical Diagnosis," written by George Washington University electrical engineer Robert Ledley and Rochester School of Medicine radiologist Lee Lusted and published in Science in 1959.

As Lusted later recalled, medical knowledge in the mid-twentieth century was usually presented as the symptoms associated with a disease, rather than as the diseases associated with a symptom.

Ledley and Lusted came up with the idea of using Bayesian reasoning to express medical knowledge as the probability of a disease given the patient's symptoms.

Bayesian statistics are conditional: they allow one to determine the probability that a specific disease is present given a specific symptom, but only with prior knowledge of how frequently the disease and the symptom occur together, as well as how frequently the symptom is present in the absence of the disease.
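A minimal sketch of that calculation for one disease and one symptom is shown below. The function name and all of the numbers are hypothetical and chosen purely for illustration; they do not come from any of the studies discussed here.

```python
def disease_given_symptom(prevalence, p_symptom_if_disease, p_symptom_if_healthy):
    """Return P(disease | symptom) using Bayes' Theorem.

    prevalence            -- prior probability of the disease
    p_symptom_if_disease  -- how often the symptom appears with the disease
    p_symptom_if_healthy  -- how often the symptom appears without the disease
    """
    # Overall probability of seeing the symptom (law of total probability).
    p_symptom = (p_symptom_if_disease * prevalence
                 + p_symptom_if_healthy * (1.0 - prevalence))
    return p_symptom_if_disease * prevalence / p_symptom


# Hypothetical inputs: 1% prevalence, symptom seen in 90% of patients with
# the disease and in 5% of patients without it.
p = disease_given_symptom(0.01, 0.90, 0.05)
print(f"P(disease | symptom) = {p:.3f}")
```

With these made-up inputs the posterior is only about 15 percent, because the symptom is far more often seen in the much larger group of people who do not have the disease.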

This closely resembles what Alan Turing described as the factor in favor of a hypothesis provided by the evidence.

Bayes' Theorem can also be used to resolve the symptom-disease complex, in which a patient presents with several symptoms.

In computer-aided diagnosis, Bayesian statistics combines the probability of each disease occurring in a population with the probability of each symptom occurring given each disease, in order to compute the probability of every possible disease given a patient's symptom-disease complex.
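The sketch below illustrates one common way to carry out this kind of calculation. It makes two simplifying assumptions that are not stated in the text: the candidate diseases are mutually exclusive and exhaustive, and symptoms are conditionally independent given the disease. The disease names, symptom names, and probabilities are all hypothetical.

```python
def diagnose(priors, likelihoods, observed):
    """Return P(disease | observed symptoms) for every candidate disease.

    priors      -- {disease: P(disease)} in the population
    likelihoods -- {disease: {symptom: P(symptom present | disease)}}
    observed    -- {symptom: True if present, False if absent}
    """
    scores = {}
    for disease, prior in priors.items():
        score = prior
        for symptom, present in observed.items():
            p = likelihoods[disease][symptom]
            # Assumption: symptoms are independent given the disease.
            score *= p if present else (1.0 - p)
        scores[disease] = score
    total = sum(scores.values())  # normalizing constant, P(observed)
    return {disease: score / total for disease, score in scores.items()}


# Hypothetical symptom-disease matrix.
priors = {"disease_a": 0.7, "disease_b": 0.2, "disease_c": 0.1}
likelihoods = {
    "disease_a": {"murmur": 0.10, "cyanosis": 0.05},
    "disease_b": {"murmur": 0.80, "cyanosis": 0.10},
    "disease_c": {"murmur": 0.90, "cyanosis": 0.70},
}
posteriors = diagnose(priors, likelihoods, {"murmur": True, "cyanosis": True})
for disease, prob in sorted(posteriors.items(), key=lambda kv: -kv[1]):
    print(f"{disease}: {prob:.3f}")
```

In this made-up example the rarest disease ends up as the most probable diagnosis, because both observed symptoms are much more typical of it than of the more common alternatives.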

According to Bayes' Theorem, all induction is statistical.

In 1960, the theorem was used for the first time to generate the posterior probability of specific diseases.

In that year, University of Utah cardiologist Homer Warner, Jr., used Bayesian statistics to diagnose well-defined congenital heart diseases at Salt Lake's Latter-Day Saints Hospital, thanks to his access to a Burroughs 205 digital computer.

Warner and his team used the theorem to calculate the probability that an undiagnosed patient with identifiable symptoms, signs, or laboratory results would fall into previously recognized disease categories.

As additional information became available, the computer program could be run again and again, generating or ranking diagnoses through serial observation.
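The idea of rerunning the calculation as each new finding arrives can be sketched as a loop in which the posterior from one step becomes the prior for the next. This is an illustrative sketch only, not a reconstruction of Warner's program; the category names and probabilities are hypothetical.

```python
def update(beliefs, likelihood):
    """Apply one Bayesian update.

    beliefs    -- {category: current probability}
    likelihood -- {category: P(new finding | category)}
    """
    unnormalized = {c: beliefs[c] * likelihood[c] for c in beliefs}
    total = sum(unnormalized.values())
    return {c: value / total for c, value in unnormalized.items()}


# Hypothetical starting beliefs over three illness categories.
beliefs = {"category_1": 0.5, "category_2": 0.3, "category_3": 0.2}

# Hypothetical findings, arriving one at a time.
findings = [
    {"category_1": 0.2, "category_2": 0.6, "category_3": 0.9},
    {"category_1": 0.1, "category_2": 0.5, "category_3": 0.8},
]

for likelihood in findings:
    beliefs = update(beliefs, likelihood)  # yesterday's posterior is today's prior
    ranking = sorted(beliefs, key=beliefs.get, reverse=True)
    print(ranking)
```

Each pass re-ranks the categories, so a diagnosis that started out as the least likely can move to the top as evidence accumulates.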

According to Warner, the Burroughs computer outperformed any professional cardiologist in applying Bayesian conditional-probability algorithms to a symptom-disease matrix of thirty-five cardiac diseases.

Other early adopters of Bayesian estimation included John Overall, Clyde Williams, and Lawrence Fitzgerald for thyroid disorders; Charles Nugent for Cushing's syndrome; Gwilym Lodwick for primary bone tumors; Martin Lipkin for hematological diseases; and Tim de Dombal for acute abdominal pain.

Over the past half-century, the Bayesian model has been extended and modified many times to account for or compensate for sequential diagnosis and conditional independence, as well as to weight various factors.

Criticisms leveled at Bayesian computer-aided diagnosis include poor prediction of rare diseases, insufficient discrimination between diseases with similar symptom complexes, an inability to quantify qualitative evidence, troubling conditional dependence between evidence and hypotheses, and the enormous amount of manual labor required to maintain the requisite joint probability distribution tables.

Bayesian diagnostic assistants have also been criticized for their shortcomings outside the populations for which they were designed.

The use of Bayesian statistics in differential diagnosis reached a low point in the mid-1970s, when rule-based decision support algorithms became more prominent.

Bayesian methods made a comeback in the 1980s and are now widely used in machine learning.

Drawing on the concept of Bayesian inference, artificial intelligence researchers have developed robust techniques for supervised learning, hidden Markov models, and mixture methods for unsupervised learning.

Bayesian inference has been used, controversially, in real-world artificial intelligence algorithms that attempt to calculate the conditional probability of a crime being committed, to screen welfare recipients for drug use, and to identify potential mass shooters and terrorists.

The approach has again come under fire, especially when the screening involves rare or extreme events, where the AI system may behave unpredictably and flag too many people as being at risk of engaging in the unwanted behavior.
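One reason rare events are hard to screen for is the base-rate effect, which the following sketch illustrates with expected counts in a population. Every number here (population size, base rate, detection rate, false-alarm rate) is hypothetical and does not describe any real screening system.

```python
def expected_flags(population, base_rate, sensitivity, false_positive_rate):
    """Return the expected number of correct and incorrect flags.

    base_rate           -- fraction of the population that is truly at risk
    sensitivity         -- P(flagged | truly at risk)
    false_positive_rate -- P(flagged | not at risk)
    """
    at_risk = population * base_rate
    not_at_risk = population - at_risk
    true_flags = at_risk * sensitivity
    false_flags = not_at_risk * false_positive_rate
    return true_flags, false_flags


# Hypothetical screen: 1 person in 10,000 is at risk; the system catches 99%
# of them and wrongly flags 1% of everyone else.
true_flags, false_flags = expected_flags(1_000_000, 0.0001, 0.99, 0.01)
share_correct = true_flags / (true_flags + false_flags)
print(f"correct flags: {true_flags:.0f}, false flags: {false_flags:.0f}")
print(f"share of flags that are correct: {share_correct:.2%}")
```

Even with a seemingly accurate system, fewer than one in a hundred of the people flagged in this made-up scenario are actually at risk, simply because the behavior being screened for is so rare.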

In the United Kingdom, Bayesian inference has also been introduced into the courtroom.

In Regina v. Adams (1996), the defense team offered jurors the Bayesian approach as an unbiased mechanism for combining the evidence introduced, which included a DNA profile and differing match probability calculations, and for establishing a personal threshold for convicting the accused "beyond a reasonable doubt."

Bayes' Theorem had already been "rediscovered" several times before Ledley, Lusted, and Warner revived it in the 1950s.

Pierre-Simon Laplace, the Marquis de Condorcet, and George Boole were among the historical figures who saw merit in the Bayesian approach to probability.

The Monty Hall problem, named after the host of the famous game show Let's Make a Deal, involves a contestant deciding whether to stay with the door they have chosen or switch to the other unopened door after Monty Hall (who knows where the prize is) opens one of the remaining doors to reveal a goat.

Contrary to intuition, conditional probability shows that switching doors doubles the contestant's chance of winning.
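The result can be checked by enumerating every case exactly. The sketch below assumes the standard three-door setup: the prize is placed uniformly at random, the contestant's first pick is uniform, and the host always opens a goat door, choosing at random when two goat doors are available. These assumptions are the usual textbook ones rather than details given in the text.

```python
from fractions import Fraction

DOORS = (0, 1, 2)


def monty_hall():
    """Return the exact win probabilities (stay, switch)."""
    stay = Fraction(0)
    switch = Fraction(0)
    for prize in DOORS:
        for pick in DOORS:
            p_case = Fraction(1, 9)  # uniform prize location and first pick
            # The host may only open a door that is neither picked nor the prize.
            host_options = [d for d in DOORS if d != pick and d != prize]
            for opened in host_options:
                p = p_case / len(host_options)
                # The door the contestant would move to by switching.
                other = next(d for d in DOORS if d not in (pick, opened))
                if pick == prize:
                    stay += p
                if other == prize:
                    switch += p
    return stay, switch


stay, switch = monty_hall()
print(stay, switch)  # prints: 1/3 2/3
```

Staying wins only when the first pick was right (one case in three), while switching wins in every other case, which is where the doubling comes from.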

~ Jai Krishna Ponnappan
